Stochastic programming

Revision as of 22:56, 15 December 2021

Authors: Roohi Menon, Hangyu Zhou, Gerald Ogbonna, Vikram Raghavan (SYSEN 6800 Fall 2021)

Introduction

Stochastic Programming, also referred to as Stochastic Optimization, is a mathematical framework that supports decision-making under uncertainty. [2] Because uncertainty is widespread, Stochastic Programming, a risk-neutral mathematical framework, finds application in areas such as process systems engineering. [3] In process engineering, uncertainties are related to prices, purity of raw materials, customer demands, and yields of pilot reactors, among others. Batch processing has been widely adopted across the process industries, and in batch manufacturing, production scheduling is one of the most crucial decisions to be made. In a deterministic optimization model, parameters such as the process of raw materials, availability of raw materials, price of different products, operation time and cost, and order demands are treated as known, without factoring in uncertainty. Such assumptions are unrealistic, because uncertain parameters are not known in advance. Hence, uncertainty needs to be factored into the model. [4]

In conventional robust optimization, all decisions are assumed to be made before the uncertainty is realized, which makes the conventional robust optimization problem overly conservative. [4] Moreover, in manufacturing, not all decisions need to be made “in the present”; a staged approach to decision making (a two-stage approach) can be adopted instead. Beyond process engineering, the Stochastic Programming framework can be applied to a variety of problems across sectors, such as electricity generation, financial planning, supply chain management, mitigation of climate change, and pollution control. [3] The field evolved from deterministic linear programming through the introduction of random variables. [5]

Theory, methodology, and/or algorithmic discussion

Two-Stage Stochastic Linear Program with Fixed Recourse:

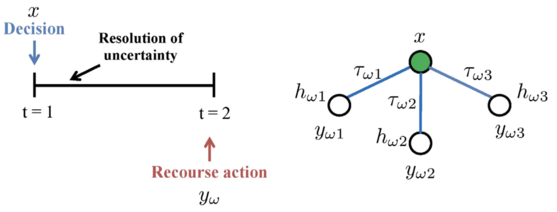

Modeling through stochastic programming is often adopted because of its proactive-reactive decision-making structure for addressing uncertainty. [2] Two-stage stochastic programming (TSP) is helpful when a problem requires the analysis of policy scenarios but the associated system information is inherently uncertain. [3] In a typical TSP, the decision variables of an optimization problem under uncertainty are divided into two stages. First-stage decisions must be made before the uncertain parameters are realized. The first-stage decision variables are proactive: they hedge against uncertainty and ensure that the solution performs well under all uncertainty realizations. [2] Once the uncertain events have unfolded, further design improvements or operational adjustments can be made, at a given cost, through the values of the second-stage variables, also known as recourse. The second-stage variables are reactive, responding to the observed realization of uncertainty. [2] In other words, each decision can only be based on the data available at the time it is made; first-stage decisions cannot depend on future observations. [6] Two-stage stochastic programming is suited for problems with a hierarchical structure, such as integrated process design, and planning and scheduling. [2]

Methodology

The classical two-stage stochastic linear program with fixed recourse [7] is given below:

$ \min z=c^Tx + E_{\xi}[\min q(\omega)^Ty(\omega)] $

$ s.t. \quad Ax = b $

$ \qquad \quad T(\omega)x + Wy(\omega) = h(\omega) $

$ \qquad \quad x \ge 0, y(\omega) \ge 0 $

where $ c $ is a known vector in $ \mathbb{R}^{n_1} $ and $ b $ is a known vector in $ \mathbb{R}^{m_1} $. $ A $ and $ W $ are known matrices of size $ m_1 \times n_1 $ and $ m_2 \times n_2 $, respectively; $ W $ is known as the recourse matrix.

The first-stage decisions are represented by the $ n_1 \times 1 $ vector $ x $. Corresponding to $ x $ are the first-stage vectors and matrices $ c $, $ b $, and $ A $. In the second stage, one of a number of random events $ \omega \in \Omega $ is realized. For a given realization $ \omega $, the second-stage problem data $ q(\omega) \in \mathbb{R}^{n_2} $, $ h(\omega) \in \mathbb{R}^{m_2} $, and $ T(\omega) $ (of size $ m_2 \times n_1 $) become known, and the second-stage decision $ y(\omega) \in \mathbb{R}^{n_2} $ is then chosen. [8]

Algorithm discussion

To solve two-stage linear stochastic programs more effectively, algorithms such as Benders decomposition or Lagrangean decomposition can be used. Benders decomposition was introduced by J. F. Benders and is widely used to solve mixed-integer problems. The algorithm decomposes the main problem into a master problem and subproblems. The master problem is defined with only the first-stage decision variables. Once the first-stage decisions are fixed at an optimal solution of the master problem, the subproblems are solved, and valid inequalities in $ x $ are derived and added to the master problem. The master problem is then solved again, and the algorithm iterates until the upper and lower bounds converge. [3] The Benders master problem is linear or mixed-integer and has fewer technical constraints, while the subproblems can be linear or nonlinear. The subproblems’ primary aim is to validate the feasibility of the master problem’s solution. [7] Benders decomposition is also known as L-shaped decomposition because, once the first-stage variables $ x $ are fixed, the rest of the problem has a block-diagonal structure. This structure can be decomposed by scenario, and each block can be solved independently. [3]

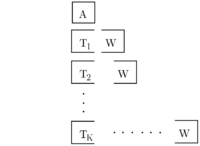

... We assume that the random vector $ \xi $ has finite support. Let $ k=1,\ldots,K $ index the possible second-stage realizations and let $ p_k $ be the corresponding probabilities. We can then write down the deterministic equivalent of the stochastic program. This form is created by associating one set of second-stage decisions ($ y_k $) with each realization of $ \xi $, i.e., with each realization of $ q_k $, $ h_k $, and $ T_k $. This large-scale deterministic counterpart of the original stochastic program is known as the extensive form:

$ \begin{align} \min c^T x + \sum_{k=1}^{K} p_k & q_k^T y_k \\ s.t. \qquad \quad Ax &= b \\ T_kx + W y_k &= h_k, &k=1,...,K \\ x \ge 0, y_k &\ge 0, &k=1,...,K \\ \end{align} $

Written out in full, the extensive form has the following block structure. The L shape of this block structure gives the L-shaped method its name.

$ \begin{array}{lccccccccccccc} \min & c^T x & + & p_1 q_1^T y_1 & + & p_2q_2^T y_2 & + & \cdots & + & p_K q_K^T y_K & & \\ s.t. & Ax & & & & & & & & & = & b \\ & T_1 x & + & W y_1 & & & & & & & = & h_1 \\ & T_2 x & + & & & W y_2 & & & & & = & h_2 \\ & \vdots & & & & & & \ddots & & & & \vdots \\ & T_K x & + & & & & & & & W y_K & = & h_K \\ & x\ge 0 & , & y_1 \ge 0 & , & y_2 \ge 0 & & \ldots & & y_K \ge 0 \\ \end{array} $
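To make the block structure concrete, the following Python sketch assembles the constraint matrix of the extensive form as a dense list of lists and prints its nonzero pattern. The helper names `extensive_form_matrix` and `sparsity` are hypothetical, and the small instance (two first-stage variables, three scenarios, a made-up 2×2 recourse matrix `W`) is invented purely for illustration.

```python
def extensive_form_matrix(A, T_list, W):
    """Assemble [[A, 0, ..., 0], [T_1, W, 0, ...], ..., [T_K, 0, ..., W]] densely."""
    n1 = len(A[0])                 # number of first-stage variables x
    m2, n2 = len(W), len(W[0])     # recourse matrix W is m2 x n2
    K = len(T_list)                # number of scenarios
    n_cols = n1 + K * n2
    rows = [list(r) + [0] * (K * n2) for r in A]    # first-stage rows: A x = b
    for k, T in enumerate(T_list):
        start = n1 + k * n2                         # column where y_k begins
        for i in range(m2):
            row = [0] * n_cols
            row[:n1] = list(T[i])                   # T_k block in the x columns
            row[start:start + n2] = list(W[i])      # W block on the diagonal
            rows.append(row)
    return rows


def sparsity(rows):
    """Render nonzeros as 'X' and zeros as '.' to expose the L shape."""
    return ["".join("X" if v != 0 else "." for v in r) for r in rows]


# Hypothetical toy data: 2 first-stage variables, K = 3 scenarios.
A = [[1, 1]]
T_list = [[[1, 0], [0, 1]]] * 3
W = [[1, 2], [3, 4]]
for line in sparsity(extensive_form_matrix(A, T_list, W)):
    print(line)
```

In the printed pattern every row has entries in the first two (first-stage) columns, and each scenario contributes one `W` block on the diagonal; together these form the "L".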

L-Shaped Algorithm

Step 0. Set $ r=s=v=0 $.

Step 1. Set $ v = v+1 $. Solve the following linear program (the master program):

$ \begin{align} \min z =c^Tx &+ \theta\\ s.t. Ax &=b & & & (1) \\ D_{\ell}x &\ge d_{\ell}, & \ell = 1, \ldots, r & & (2)\\ E_{\ell}x + \theta &\ge e_{\ell}, & \ell = 1, \ldots, s & & (3) \\ x &\ge 0, & \theta \in \mathbb{R} & & (4) \end{align} $

Let $ (x^v, \theta ^v) $ be an optimal solution. If there is no constraint (3), set $ \theta ^v = -\infty $; $ x^v $ is then defined by the remaining constraints.

Step 2. For $ k = 1,\ldots,K $ solve the following linear program:

$ \begin{align} \min &w' = e^Tv^+ + e^Tv^- \\ s.t. &Wy + Iv^+ - Iv^- = h_k - T_kx^v \\ &y \ge 0, v^+ \ge 0, v^- \ge 0 \\ \end{align} $

where $ e^T = (1,\ldots,1) $ and $ I $ is the identity matrix. Solve these problems in turn until, for some $ k $, the optimal value is $ w' > 0 $. In this case, let $ \sigma ^v $ be the associated simplex multipliers and define

$ D_{r+1} = (\sigma ^v)^T T_k $

$ d_{r+1} = (\sigma ^v)^T h_k $

to generate a constraint $ D_{r+1}x \ge d_{r+1} $ (called a feasibility cut) of type (2). Set $ r=r+1 $, add the constraint to the constraint set (2), and return to Step 1. If $ w' = 0 $ for all $ k $, go to Step 3.
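A feasibility cut only needs the multipliers $ \sigma^v $ from Step 2. The Python sketch below (hypothetical helper names) forms $ D_{r+1} $ and $ d_{r+1} $ and checks that the current proposal $ x^v $ violates the new cut, which is what makes the cut useful: $ \sigma^T(h_k - T_k x^v) = w' > 0 $ implies $ D_{r+1}x^v < d_{r+1} $. The toy instance (a single recourse constraint $ y = 2 - x_1 $ with $ y \ge 0 $, proposal $ x_1 = 5 $, Phase-1 value $ w' = 3 $ with multiplier $ \sigma = -1 $) is made up for illustration.

```python
def feasibility_cut(sigma, T_k, h_k):
    """Return (D, d) with D = sigma^T T_k and d = sigma^T h_k; the cut reads D x >= d."""
    n1 = len(T_k[0])
    D = [sum(sigma[i] * T_k[i][j] for i in range(len(sigma))) for j in range(n1)]
    d = sum(s * h for s, h in zip(sigma, h_k))
    return D, d


def violates(D, d, x, tol=1e-9):
    """True if x violates D x >= d (beyond a small tolerance)."""
    return sum(a * b for a, b in zip(D, x)) < d - tol


# Hypothetical toy: W = [[1]], T_k = [[1]], h_k = [2], i.e. y = 2 - x_1 with y >= 0.
# At x^v = (5,), Phase 1 gives w' = 3 with multiplier sigma = (-1,).
D, d = feasibility_cut(sigma=[-1.0], T_k=[[1.0]], h_k=[2.0])
print(D, d)                      # the cut -x_1 >= -2, i.e. x_1 <= 2
print(violates(D, d, [5.0]))     # the proposal x^v is cut off
print(violates(D, d, [1.0]))     # a second-stage-feasible x_1 is kept
```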

Step 3. For $ k=1,\ldots,K $ solve the linear program

$ \begin{align} \min &w = q^T_k y \\ s.t. &Wy = h_k - T_k x^v & & (5)\\ &y \ge 0 \end{align} $

Let $ \pi ^v_k $ be the simplex multipliers associated with the optimal solution of Problem $ k $ of type (5). Define

$ E_{s+1} = \sum_{k=1}^K p_k(\pi ^v_k)^T T_k $

$ e_{s+1} = \sum_{k=1}^K p_k(\pi ^v_k)^T h_k $

Let $ w^v = e_{s+1}-E_{s+1}x^v $. If $ \theta ^v \ge w^v $, stop; $ x^v $ is an optimal solution. Otherwise, add the constraint $ E_{s+1}x + \theta \ge e_{s+1} $ (called an optimality cut) to the constraint set (3), set $ s = s+1 $, and return to Step 1.
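Once the scenario multipliers are known, Step 3 reduces to a few probability-weighted sums. The Python sketch below (hypothetical function names `optimality_cut` and `stop_test`) computes $ E_{s+1} $, $ e_{s+1} $ and $ w^v $ and applies the stopping test. The usage lines plug in the data of Iteration 1 of the numerical example below, whose second-stage constraints are inequalities, so the multipliers there are nonpositive.

```python
def optimality_cut(probs, duals, T_list, h_list):
    """E = sum_k p_k pi_k^T T_k and e = sum_k p_k pi_k^T h_k; the cut reads E x + theta >= e."""
    n1 = len(T_list[0][0])
    E, e = [0.0] * n1, 0.0
    for p, pi, T, h in zip(probs, duals, T_list, h_list):
        for j in range(n1):
            E[j] += p * sum(pi[i] * T[i][j] for i in range(len(pi)))
        e += p * sum(a * b for a, b in zip(pi, h))
    return E, e


def stop_test(theta, E, e, x, tol=1e-9):
    """Return (stop, w) with w = e - E x; stop when theta >= w (up to tol)."""
    w = e - sum(a * b for a, b in zip(E, x))
    return theta >= w - tol, w


# Data from Iteration 1 of the numerical example (T is the same in both scenarios).
T = [[-60, 0], [0, -80], [0, 0], [0, 0]]
E, e = optimality_cut(
    probs=[0.4, 0.6],
    duals=[[0, -0.3, 0, -1.3], [-0.232, -0.176, 0, 0]],
    T_list=[T, T],
    h_list=[[0, 0, 50, 10], [0, 0, 30, 30]],
)
stop, w = stop_test(float("-inf"), E, e, [4, 2])   # theta^1 = -inf: never stops
print([round(v, 3) for v in E], round(e, 3), round(w, 3), stop)
```

With these inputs the sketch reproduces $ E_1 = (8.352, 18.048) $, $ e_1 = -5.2 $ and $ w^1 = -74.704 $ from the worked iteration, and the test does not stop because $ \theta^1 = -\infty $.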

This method approximates the expected recourse function $ \mathcal{L}(x) $, i.e., the expected optimal second-stage cost for a first-stage decision $ x $, by an outer linearization, which is maintained in the master program (1)-(4). The master program finds a proposal $ x $ and sends it to the second stage. Two types of constraints are added sequentially: (i) feasibility cuts (2), which determine the set $ \{x \mid \mathcal{L}(x) < +\infty\} $, and (ii) optimality cuts (3), which are linear approximations to $ \mathcal{L} $ on its domain of finiteness.
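Why every optimality cut is valid follows from LP weak duality. Write $ Q_k(x) = \min\{q_k^T y \mid Wy = h_k - T_k x,\ y \ge 0\} $ for the second-stage value in scenario $ k $ (with $ Q_k(x) = +\infty $ when this problem is infeasible), so that $ \mathcal{L}(x) = \sum_{k=1}^K p_k Q_k(x) $. The Step 3 multipliers satisfy $ W^T \pi_k^v \le q_k $, a condition that does not involve $ x $. Hence, for every $ x $ and every feasible $ y \ge 0 $:

$ \begin{align} q_k^T y \ \ge\ (\pi_k^v)^T W y = (\pi_k^v)^T (h_k - T_k x) \quad \Longrightarrow \quad Q_k(x) \ \ge\ (\pi_k^v)^T (h_k - T_k x) \end{align} $

Weighting by $ p_k $ and summing over the scenarios gives

$ \begin{align} \mathcal{L}(x) \ \ge\ \sum_{k=1}^K p_k (\pi_k^v)^T h_k - \Big( \sum_{k=1}^K p_k (\pi_k^v)^T T_k \Big) x = e_{s+1} - E_{s+1} x \end{align} $

By LP strong duality the bound is tight at $ x = x^v $, so $ w^v = \mathcal{L}(x^v) $. Since the cuts never overestimate $ \mathcal{L} $, $ \theta^v \le w^v $ always holds, and the test $ \theta^v \ge w^v $ is met exactly when the master program's estimate of the recourse cost at $ x^v $ is already exact.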

Numerical Example

To illustrate the algorithm mentioned above, let's take a look at the following numerical example.

$ \begin{align} z = \min 10{x_1} + 15&{x_2} + E_\xi (q_1y_1 + q_2y_2) \\ s.t. x_1 + x_2 &\le 12 \\ 6y_1 + 10y_2 &\le 60x_1 \\ 8y_1 + 5y_2 &\le 80x_2 \\ y_1 &\le d_1, y_2 \le d_2 \\ x_1 &\ge 4, x_2 \ge 2 \\ y_1, y_2 &\ge 0 \end{align} $

where $ \xi ^T = (d_1, d_2, q_1, q_2) $ takes the value $ \xi _1 = (50, 10, -2.4, -2.8) $ with probability 0.4 and the value $ \xi _2 = (30, 30, -2.8, -3.2) $ with probability 0.6.

Note that in this example, since $ x \ge 0 $ and $ d \ge 0 $, the choice $ y_1 = y_2 = 0 $ satisfies every second-stage constraint, so the second stage is always feasible; that is, $ x \in K_2 $ (the set of first-stage decisions with a feasible second stage) always holds. We can therefore skip the feasibility cuts (Step 2) in all iterations. The L-shaped method iterations are shown below:

Iteration 1:

Step 1. With no $ \theta $, the master program is $ z = min\{10x_1 + 15x_2 | x_1 + x_2 \le 12, x_1 \ge 4, x_2 \ge 2\} $. Result is $ x^1 = (4, 2)^T $. Set $ \theta ^1 = -\infty $.

Step 2. No feasibility cut is needed.

Step 3.

- For $ \xi = \xi _1 $, solve:

- $ w = min \{-2.4y_1 - 2.8y_2 | 6y_1 + 10y_2 \le 240, 8y_1 + 5y_2 \le 160, 0\le y_1 \le 50, 0\le y_2 \le 10 \} $

- The solution is $ w_1 = -61, y^T = (13.75, 10), \pi _1^T = (0, -0.3, 0, -1.3) $

- For $ \xi = \xi _2 $, solve:

- $ w = min \{-2.8y_1 - 3.2y_2 | 6y_1 + 10y_2 \le 240, 8y_1 + 5y_2 \le 160, 0\le y_1 \le 30, 0\le y_2 \le 30 \} $

- The solution is $ w_2 = -83.84, y^T = (8, 19.2), \pi _2^T = (-0.232, -0.176, 0, 0) $

- Since $ h_1 = (0, 0, 50, 10)^T, h_2 = (0, 0, 30, 30)^T $, we have

- $ e_1 = 0.4\cdot \pi _1^T \cdot h_1 + 0.6\cdot \pi _2^T \cdot h_2 = 0.4 \times (-13) + 0.6 \times (0) = -5.2 $

- Here, the matrix $ T $ is the same in these two scenarios, which is $ \begin{bmatrix} -60 & 0 \\ 0 & -80 \\ 0 & 0 \\ 0 & 0 \end{bmatrix} $. Therefore, we have

- $ E_1 = 0.4\cdot \pi _1^T \cdot T + 0.6\cdot \pi _2^T \cdot T = 0.4 \times (0, 24) + 0.6 \times (13.92, 14.08) = (8.352, 18.048) $

- Since $ w^1 = -5.2 - (8.352, 18.048) \cdot x^1 = -74.704 > \theta ^1 = -\infty $, we add the cut

- $ 8.352x_1 + 18.048x_2 + \theta \ge -5.2 $
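Each scenario solution quoted in this iteration can be checked without re-solving the LP: a primal point $ y $ and multipliers $ \pi $ certify each other as optimal when both are feasible and their objective values coincide. The Python sketch below (the helper `certify` is hypothetical) applies this test to the two subproblems of Iteration 1, written as $ \min\{q^T y \mid Wy \le r,\ y \ge 0\} $ with $ r = h - Tx^1 $, whose dual requires $ \pi \le 0 $ and $ W^T\pi \le q $.

```python
def certify(q, W, r, y, pi, tol=1e-6):
    """Certify optimality for  min q.y  s.t.  W y <= r, y >= 0.

    The dual is  max r.pi  s.t.  W^T pi <= q, pi <= 0.  If y and pi are both
    feasible and r.pi equals q.y, weak duality implies both are optimal.
    Returns (certified, primal objective value).
    """
    m, n = len(W), len(q)
    primal_ok = all(v >= -tol for v in y) and all(
        sum(W[i][j] * y[j] for j in range(n)) <= r[i] + tol for i in range(m))
    dual_ok = all(p <= tol for p in pi) and all(
        sum(W[i][j] * pi[i] for i in range(m)) <= q[j] + tol for j in range(n))
    primal_val = sum(a * b for a, b in zip(q, y))
    dual_val = sum(a * b for a, b in zip(r, pi))
    return primal_ok and dual_ok and abs(primal_val - dual_val) <= tol, primal_val


# Iteration 1: r = h_k - T x^1 with x^1 = (4, 2), i.e. (60*4, 80*2, d_1, d_2).
W = [[6, 10], [8, 5], [1, 0], [0, 1]]
checks = [
    certify(q=[-2.4, -2.8], W=W, r=[240, 160, 50, 10], y=[13.75, 10], pi=[0, -0.3, 0, -1.3]),
    certify(q=[-2.8, -3.2], W=W, r=[240, 160, 30, 30], y=[8, 19.2], pi=[-0.232, -0.176, 0, 0]),
]
expected_recourse = 0.4 * checks[0][1] + 0.6 * checks[1][1]
print(checks, round(expected_recourse, 3))
```

Both pairs pass, and the probability-weighted optimal values $ 0.4 \times (-61) + 0.6 \times (-83.84) $ equal $ w^1 = -74.704 $, the value obtained from the cut.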

Iteration 2:

Step 1. The master program is $ z = min\{10x_1 + 15x_2 + \theta | x_1 + x_2 \le 12, x_1 \ge 4, x_2 \ge 2, 8.352x_1 + 18.048x_2 + \theta \ge -5.2\} $. Result is $ z = -22.992, x^2 = (4, 8)^T, \theta ^2 = -182.992 $.
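As a hand check of this step: with a single optimality cut, the minimizing $ \theta $ lies on the cut, so substituting $ \theta = -5.2 - 8.352x_1 - 18.048x_2 $ leaves a linear objective over the triangle with vertices $ (4,2) $, $ (4,8) $ and $ (10,2) $ defined by $ x_1 + x_2 \le 12 $, $ x_1 \ge 4 $, $ x_2 \ge 2 $:

$ \begin{align} z &= 10x_1 + 15x_2 + \theta = 1.648x_1 - 3.048x_2 - 5.2 \\ z(4,2) &= -4.704, \qquad z(10,2) = 5.184, \qquad z(4,8) = -22.992 \end{align} $

The smallest value is attained at $ x^2 = (4, 8)^T $, where $ \theta^2 = -5.2 - 8.352 \cdot 4 - 18.048 \cdot 8 = -182.992 $.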

Step 2. No feasibility cut is needed.

Step 3.

- For $ \xi = \xi _1 $, solve:

- $ w = min \{-2.4y_1 - 2.8y_2 | 6y_1 + 10y_2 \le 240, 8y_1 + 5y_2 \le 640, 0\le y_1 \le 50, 0\le y_2 \le 10 \} $

- The solution is $ w_1 = -96, y^T = (40, 0), \pi _1^T = (-0.4, 0, 0, 0) $

- For $ \xi = \xi _2 $, solve:

- $ w = min \{-2.8y_1 - 3.2y_2 | 6y_1 + 10y_2 \le 240, 8y_1 + 5y_2 \le 640, 0\le y_1 \le 30, 0\le y_2 \le 30 \} $

- The solution is $ w_2 = -103.2, y^T = (30, 6), \pi _2^T = (-0.32, 0, -0.88, 0) $

- Thus,

- $ e_2 = 0.4\cdot \pi _1^T \cdot h_1 + 0.6\cdot \pi _2^T \cdot h_2 = 0.4 \times (0) + 0.6 \times (-26.4) = -15.84 $

- $ E_2 = 0.4\cdot \pi _1^T \cdot T + 0.6\cdot \pi _2^T \cdot T = 0.4 \times (24, 0) + 0.6 \times (19.2, 0) = (21.12, 0) $

- Since $ w^2 = -15.84 - 21.12 \cdot 4 = -100.32 > \theta ^2 = -182.992 $, add the cut

- $ 21.12x_1 + \theta \ge -15.84 $

Iteration 3:

Step 1. The master program is $ z = min\{10x_1 + 15x_2 + \theta | x_1 + x_2 \le 12, x_1 \ge 4, x_2 \ge 2, 8.352x_1 + 18.048x_2 + \theta \ge -5.2, 21.12x_1 + \theta \ge -15.84\} $. Result is $ z = -10.39375, x^3 = (6.6828, 5.3172)^T, \theta ^3 = -156.97994 $.
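As a hand check, $ x^3 $ is the vertex of the master program at which $ x_1 + x_2 \le 12 $ and both optimality cuts are active ($ 6.6828 + 5.3172 = 12 $). Equating the two cuts and substituting $ x_2 = 12 - x_1 $:

$ \begin{align} -5.2 - 8.352x_1 - 18.048x_2 &= -15.84 - 21.12x_1 \\ 12.768x_1 - 18.048(12 - x_1) &= -10.64 \\ 30.816x_1 &= 205.936 \quad \Rightarrow \quad x_1 \approx 6.6828, \quad x_2 \approx 5.3172 \\ \theta^3 = -15.84 - 21.12x_1 &\approx -156.9799 \end{align} $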

Step 2. No feasibility cut is needed.

Step 3.

- For $ \xi = \xi _1 $, solve:

- $ w = min \{-2.4y_1 - 2.8y_2 | 6y_1 + 10y_2 \le 400.968, 8y_1 + 5y_2 \le 425.376, 0\le y_1 \le 50, 0\le y_2 \le 10 \} $

- The solution is $ w_1 = -140.6128, y^T = (46.922, 10), \pi _1^T = (0, -0.3, 0, -1.3) $

- For $ \xi = \xi _2 $, solve:

- $ w = min \{-2.8y_1 - 3.2y_2 | 6y_1 + 10y_2 \le 400.968, 8y_1 + 5y_2 \le 425.376, 0\le y_1 \le 30, 0\le y_2 \le 30 \} $

- The solution is $ w_2 = -154.7098, y^T = (30, 22.0968), \pi _2^T = (-0.32, 0, -0.88, 0) $

- Thus,

- $ e_3 = 0.4\cdot \pi _1^T \cdot h_1 + 0.6\cdot \pi _2^T \cdot h_2 = 0.4 \times (-13) + 0.6 \times (-26.4) = -21.04 $

- $ E_3 = 0.4\cdot \pi _1^T \cdot T + 0.6\cdot \pi _2^T \cdot T = 0.4 \times (0, 24) + 0.6 \times (19.2, 0) = (11.52, 9.6) $

- Since $ w^3 = -21.04 - 11.52 \cdot 6.6828 - 9.6 \cdot 5.3172 = -149.070976 > \theta ^3 = -156.97994 $, add the cut

- $ 11.52x_1 + 9.6x_2 + \theta \ge -21.04 $

Iteration 4:

Step 1. The master program is $ z = min\{10x_1 + 15x_2 + \theta | x_1 + x_2 \le 12, x_1 \ge 4, x_2 \ge 2, 8.352x_1 + 18.048x_2 + \theta \ge -5.2, 21.12x_1 + \theta \ge -15.84, 11.52x_1 + 9.6x_2 + \theta \ge -21.04\} $. Result is $ z = -8.895, x^4 = (4, 3.375)^T, \theta ^4 = -99.52 $.

Step 2. No feasibility cut is needed.

Step 3.

- For $ \xi = \xi _1 $, solve:

- $ w = min \{-2.4y_1 - 2.8y_2 | 6y_1 + 10y_2 \le 240, 8y_1 + 5y_2 \le 270, 0\le y_1 \le 50, 0\le y_2 \le 10 \} $

- The solution is $ w_1 = -88.8, y^T = (30, 6), \pi _1^T = (-0.208, -0.144, 0, 0) $

- For $ \xi = \xi _2 $, solve:

- $ w = min \{-2.8y_1 - 3.2y_2 | 6y_1 + 10y_2 \le 240, 8y_1 + 5y_2 \le 270, 0\le y_1 \le 30, 0\le y_2 \le 30 \} $

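- The solution is $ w_2 = -103.2, y^T = (30, 6) $. This vertex is degenerate ($ y_1 = 30 $ is also at its upper bound), so the simplex multipliers are not unique. Selecting $ \pi _2^T = (-0.232, -0.176, 0, 0) $, we have

- $ e_4 = 0.4\cdot \pi _1^T \cdot h_1 + 0.6\cdot \pi _2^T \cdot h_2 = 0.4 \times (0) + 0.6 \times (0) = 0 $

- $ E_4 = 0.4\cdot \pi _1^T \cdot T + 0.6\cdot \pi _2^T \cdot T = 0.4 \times (12.48, 11.52) + 0.6 \times (13.92, 14.08) = (13.344, 13.056) $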

- Since $ w^4 = 0 - 13.344 \cdot 4 - 13.056 \cdot 3.375 = -97.44 > \theta ^4 = -99.52 $, add the cut

- $ 13.344x_1 + 13.056x_2 + \theta \ge 0 $
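The choice of multipliers in Iteration 4 changes the cut but not $ w^4 $. The Python sketch below compares the multipliers selected above with a second set, $ \pi_2^T = (-0.32, 0, -0.88, 0) $ (the scenario-2 multipliers of Iterations 2 and 3), which is also optimal at this degenerate vertex. Both are dual feasible with value $ -103.2 $; they produce different cuts, yet both cuts give $ w^4 = -97.44 $ at $ x^4 $, because each cut is tight at the point where it is generated. Variable names are illustrative only.

```python
def dot(a, b):
    return sum(u * v for u, v in zip(a, b))


# Iteration 4 data from the example: x^4 = (4, 3.375); T is identical in both scenarios.
W = [[6, 10], [8, 5], [1, 0], [0, 1]]
T = [[-60, 0], [0, -80], [0, 0], [0, 0]]
W_cols = [[W[i][j] for i in range(4)] for j in range(2)]   # columns of W
T_cols = [[T[i][j] for i in range(4)] for j in range(2)]   # columns of T
x4 = (4.0, 3.375)
h1, h2 = (0, 0, 50, 10), (0, 0, 30, 30)
q2 = (-2.8, -3.2)
r2 = [h2[i] - dot(T[i], x4) for i in range(4)]   # right-hand side h_2 - T x^4
pi1 = (-0.208, -0.144, 0, 0)                     # scenario-1 multipliers from the text

candidates = {
    "selected in the text": (-0.232, -0.176, 0, 0),
    "alternative": (-0.32, 0, -0.88, 0),
}
results = {}
for name, pi2 in candidates.items():
    # Dual feasibility for  min q.y, W y <= r, y >= 0:  pi <= 0 and W^T pi <= q.
    feasible = all(p <= 0 for p in pi2) and all(
        dot(W_cols[j], pi2) <= q2[j] + 1e-9 for j in range(2))
    E = [0.4 * dot(pi1, T_cols[j]) + 0.6 * dot(pi2, T_cols[j]) for j in range(2)]
    e = 0.4 * dot(pi1, h1) + 0.6 * dot(pi2, h2)
    w = e - dot(E, x4)
    results[name] = (feasible, dot(pi2, r2), E, e, w)
    print(name, feasible, round(dot(pi2, r2), 4), [round(v, 4) for v in E], round(e, 4), round(w, 4))
```

The alternative multipliers would give the cut $ 16.512x_1 + 4.608x_2 + \theta \ge -15.84 $ instead of the one added above; the L-shaped method is valid with either choice, although later iterates may differ.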

Iteration 5:

Step 1. The master program is $ z = min\{10x_1 + 15x_2 + \theta | x_1 + x_2 \le 12, x_1 \ge 4, x_2 \ge 2, 8.352x_1 + 18.048x_2 + \theta \ge -5.2, 21.12x_1 + \theta \ge -15.84, 11.52x_1 + 9.6x_2 + \theta \ge -21.04, 13.344x_1 + 13.056x_2 + \theta \ge 0\} $. Result is $ z = -8.5583, x^5 = (4.6667, 3.625)^T, \theta ^5 = -109.6 $.

Step 2. No feasibility cut is needed.

Step 3.

- For $ \xi = \xi _1 $, solve:

- $ w = min \{-2.4y_1 - 2.8y_2 | 6y_1 + 10y_2 \le 280, 8y_1 + 5y_2 \le 290, 0\le y_1 \le 50, 0\le y_2 \le 10 \} $

- The solution is $ w_1 = -100, y^T = (30, 10), \pi _1^T = (0, -0.3, 0, -1.3) $

- For $ \xi = \xi _2 $, solve:

- $ w = min \{-2.8y_1 - 3.2y_2 | 6y_1 + 10y_2 \le 280, 8y_1 + 5y_2 \le 290, 0\le y_1 \le 30, 0\le y_2 \le 30 \} $

- The solution is $ w_2 = -116, y^T = (30, 10), \pi _2^T = (-0.232, -0.176, 0, 0) $

- Thus,

- $ e_5 = 0.4\cdot \pi _1^T \cdot h_1 + 0.6\cdot \pi _2^T \cdot h_2 = 0.4 \times (-13) + 0.6 \times (0) = -5.2 $

- $ E_5 = 0.4\cdot \pi _1^T \cdot T + 0.6\cdot \pi _2^T \cdot T = 0.4 \times (0, 24) + 0.6 \times (13.92, 14.08) = (8.352, 18.048) $

- Since $ w^5 = -5.2 - 8.352 \cdot 4.6667 - 18.048 \cdot 3.625 = -109.6 = \theta ^5 $, the stopping condition $ \theta ^5 \ge w^5 $ holds, so we stop.

- $ x^5 = (4.6667, 3.625)^T $ is the optimal solution, with optimal value $ z = -8.5583 $.
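To reproduce the whole run, here is a self-contained Python sketch of the L-shaped loop on this example. It is an illustration under stated assumptions, not a reference implementation: to stay dependency-free it solves every LP (the master program and the dual of each scenario subproblem) by brute-force vertex enumeration, which is only workable for tiny problems, where a real implementation would call an LP solver. It replaces $ \theta^1 = -\infty $ with an assumed finite floor $ \theta \ge -10^6 $, and it skips Step 2 because the second stage is always feasible here. At degenerate vertices such as the scenario-2 subproblem in Iteration 4 it may pick different multipliers than the text, so intermediate cuts can differ, but since the optimal first-stage solution of this example is unique it should end at the same $ x $ and $ z $.

```python
from itertools import combinations


def solve_square(a, b):
    """Gauss-Jordan elimination with partial pivoting; returns None if a is singular."""
    n = len(b)
    m = [list(row) + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        piv = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[piv][col]) < 1e-12:
            return None
        m[col], m[piv] = m[piv], m[col]
        for r in range(n):
            if r != col:
                f = m[r][col] / m[col][col]
                m[r] = [vr - f * vc for vr, vc in zip(m[r], m[col])]
    return [m[i][n] / m[i][i] for i in range(n)]


def lp_min(c, rows, rhs, tol=1e-7):
    """min c.z s.t. rows[i].z <= rhs[i], by enumerating vertices (bounded, pointed LPs only)."""
    best_val, best_z = None, None
    for idx in combinations(range(len(rows)), len(c)):
        z = solve_square([rows[i] for i in idx], [rhs[i] for i in idx])
        if z is None:
            continue
        if all(sum(a * b for a, b in zip(r, z)) <= g + tol * (1 + abs(g))
               for r, g in zip(rows, rhs)):
            val = sum(a * b for a, b in zip(c, z))
            if best_val is None or val < best_val - tol:
                best_val, best_z = val, z
    return best_val, best_z


# Example data: the second stage is  min q.y  s.t.  W y <= h - T x,  y >= 0.
P = [0.4, 0.6]
H = [[0, 0, 50, 10], [0, 0, 30, 30]]
Q = [[-2.4, -2.8], [-2.8, -3.2]]
W = [[6, 10], [8, 5], [1, 0], [0, 1]]
T = [[-60, 0], [0, -80], [0, 0], [0, 0]]


def scenario_multipliers(k, x):
    """Solve the dual  max (h_k - T x).pi  s.t.  W^T pi <= q_k, pi <= 0  (as a min problem)."""
    r = [H[k][i] - sum(T[i][j] * x[j] for j in range(2)) for i in range(4)]
    rows = [[W[i][j] for i in range(4)] for j in range(2)]                # W^T pi <= q_k
    rows += [[1.0 if i == j else 0.0 for i in range(4)] for j in range(4)]  # pi <= 0
    _, pi = lp_min([-v for v in r], rows, Q[k] + [0.0] * 4)
    return pi


def l_shaped(theta_floor=-1e6, max_iter=20, tol=1e-6):
    cuts = []                                  # optimality cuts (E, e): E x + theta >= e
    for v in range(1, max_iter + 1):
        # Master program over z = (x1, x2, theta), every constraint written as "<=".
        rows = [[1, 1, 0], [-1, 0, 0], [0, -1, 0], [0, 0, -1]]
        rhs = [12, -4, -2, -theta_floor]
        for E, e in cuts:
            rows.append([-E[0], -E[1], -1])
            rhs.append(-e)
        z, (x1, x2, theta) = lp_min([10, 15, 1], rows, rhs)
        # Step 3: aggregate the scenario multipliers into one optimality cut.
        E, e = [0.0, 0.0], 0.0
        for k in range(len(P)):
            pi = scenario_multipliers(k, (x1, x2))
            for j in range(2):
                E[j] += P[k] * sum(pi[i] * T[i][j] for i in range(4))
            e += P[k] * sum(pi[i] * H[k][i] for i in range(4))
        w = e - (E[0] * x1 + E[1] * x2)
        if theta >= w - tol:                   # stopping test of Step 3
            return v, (x1, x2), z
        cuts.append((E, e))
    raise RuntimeError("L-shaped loop did not converge")


iterations, x_opt, z_opt = l_shaped()
print(iterations, [round(v, 4) for v in x_opt], round(z_opt, 4))
```

The final master value equals the true optimal value at termination, because $ \theta^v \ge w^v $ means the lower bound from the master program matches the cost of the proposed $ x^v $.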

Applications

Apart from the process industry, two-stage linear stochastic programming finds application in other fields as well. For instance, the optimal design of distributed energy systems must account for various uncertainties: in the demand and supply of energy, in economic factors such as unit investment cost and energy price, and in technical parameters such as efficiency. Zhou et al. [9] developed a two-stage stochastic programming model for the optimal design of a distributed energy system with a stage decomposition-based solution strategy. The authors accounted for both demand and supply uncertainty. They applied a genetic algorithm to the first-stage variables and the Monte Carlo method to the second-stage variables.

Another application of two-stage linear stochastic programming is the bike-sharing system (BSS). The system needs to ensure that bikes are available at all stations to meet the given demand. To maintain this balance, redistribution trucks transfer bikes from bike-surplus stations to bike-deficient stations. This problem is referred to as the bike repositioning problem (BRP). [10] A related challenge in the BRP is the holding cost of the depot. While being transferred from one station to another, bikes can be damaged or lost, which can lead to an imbalance between demand and supply in the BSS. Bikes that cannot be balanced among the stations of the BSS are placed back at the depot, which increases the depot's holding cost. To address these concerns, Tang et al. [10] developed a two-stage stochastic program that captures the uncertainty in redistribution demand within the system. In the first stage, before the redistribution demand is realized, routing decisions are made. In the second stage, loading/unloading decisions at each station and at the depot are made. Holding cost is incorporated into the model, so that the model's primary objective is to determine the best routes of the repositioning truck and the optimal loading/unloading quantities at each station and depot. The model minimizes the expected sum of the transportation cost, the penalty cost of all stations, and the holding cost of the depot.

Conclusion

From the previous examples, it is evident that two-stage linear stochastic programming is applicable across many areas, such as the petrochemical and pharmaceutical industries, carbon capture, and energy storage, among others. [3] Stochastic programming can primarily be used to model two types of uncertainty: 1) exogenous uncertainty, which is the most widely considered, and 2) endogenous uncertainty, where the realization of the uncertainty depends on the decisions taken. The main challenge in stochastic programming is that the class of problems that can be solved is limited. An ‘ideal’ problem would be a multi-stage stochastic mixed-integer nonlinear program under both exogenous and endogenous uncertainty, with an arbitrary probability distribution that is stagewise dependent. [3] However, current algorithms and computational resources remain limited in their ability to solve this ‘ideal’ problem.

References

- [1] Li, C., & Grossmann, I. E. (2021). A review of stochastic programming methods for optimization of process systems under uncertainty. Frontiers in Chemical Engineering, 2. https://doi.org/10.3389/fceng.2020.622241

- [2] Chu, Y., & You, F. (2013). Integration of scheduling and dynamic optimization of batch processes under uncertainty: Two-stage stochastic programming approach and enhanced generalized Benders decomposition algorithm. Industrial & Engineering Chemistry Research, 52(47), 16851–16869. https://doi.org/10.1021/ie402621t

- [3] Li, C., & Grossmann, I. E. (2021). A review of stochastic programming methods for optimization of process systems under uncertainty. Frontiers in Chemical Engineering, 2. https://doi.org/10.3389/fceng.2020.622241

- [4] Shapiro, A., & Philpott, A. A tutorial on stochastic programming.

- [5] Powell, W. B. (2019). A unified framework for stochastic optimization. European Journal of Operational Research, 275(3), 795–821.

- [6] Barik, S. K., Biswal, M. P., & Chakravarty, D. (2012). Two-stage stochastic programming problems involving interval discrete random variables. OPSEARCH, 49, 280–298. https://doi.org/10.1007/s12597-012-0078-1

- [7] Soares, J., Canizes, B., Ghazvini, M. A. F., Vale, Z., & Venayagamoorthy, G. K. (2017). Two-stage stochastic model using Benders’ decomposition for large-scale energy resource management in smart grids. IEEE Transactions on Industry Applications, 53(6), 5905–5914.

- [8] Cite error: no text was provided for this reference.

- [9] Zhou, Z., Zhang, J., Liu, P., Li, Z., Georgiadis, M. C., & Pistikopoulos, E. N. (2013). A two-stage stochastic programming model for the optimal design of distributed energy systems. Applied Energy, 103, 135–144. https://doi.org/10.1016/j.apenergy.2012.09.019

- [10] Tang, Q., Fu, Z., Zhang, D., Guo, H., & Li, M. (2020). Addressing the bike repositioning problem in bike sharing system: A two-stage stochastic programming model. Scientific Programming, 2020, Article ID 8868892. https://doi.org/10.1155/2020/8868892