Sparse Reconstruction with Compressed Sensing

Author: Ngoc Ly (SysEn 5800 Fall 2021)

Compressed Sensing (CS)

In this context, compression is closely tied to sparsity: a signal is compressible when it has a sparse, or approximately sparse, representation. So when we talk about compression we are really referring to sparsity. We introduce compressed sensing and then focus on reconstruction.

The three big groups of reconstruction algorithms are: [1]

- Optimization methods: these include $ l_1 $ minimization, i.e. basis pursuit, and quadratically constrained $ l_1 $ minimization, i.e. basis pursuit denoising.

- Greedy methods: these include Orthogonal Matching Pursuit and Compressive Sampling Matching Pursuit (CoSaMP).

- Thresholding-based methods: these include Iterative Hard Thresholding (IHT), Iterative Soft Thresholding, Approximate IHT (AM-IHT), and many more.

More cutting-edge methods include dynamic programming.

We will cover one of these, IHT. Why IHT? Basis pursuit and matching-pursuit-type algorithms belong to a more general class of iterative thresholding algorithms [2], so IHT is a natural place to start. When the sensing matrix satisfies the restricted isometry property (RIP), IHT converges quickly.

Introduction

Notation

$ \mathbf{x} \in \mathbb{R}^N $ is the signal, which is often not exactly sparse but approximately sparse.

$ \Phi \in \mathbb{R}^{M \times N} $, with $ M \ll N $, is the sensing matrix, for example a random Gaussian or Bernoulli matrix.

$ y \in \mathbb{R}^M $ is the vector of observed samples.

$ e \in \mathbb{R}^M $ is the noise vector, with $ \| e \|_2 \leq \eta $.

$ \|x\|_p = \left( \sum_{i=1}^{N} |x_i|^p \right)^{1/p} $ is the $ p $-norm for $ p \geq 1 $, and $ \|x\|_0 $ counts the nonzero entries of $ x $.

$ x = \Psi \alpha $, where $ \Psi $ is the sparsifying matrix and $ \alpha $ are the coefficients.

Goal

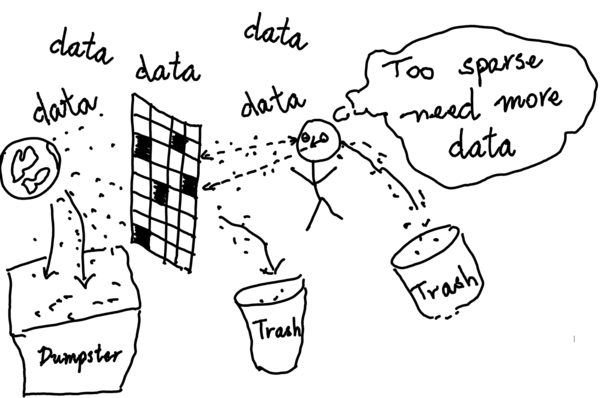

Compressed sensing begins with the underdetermined linear system $ y = \Phi x + e $, where $ \Phi \in \mathbb{R}^{M \times N} $ with $ M \ll N $. The goal is to reconstruct $ x \in \mathbb{R}^N $ given $ y $ and $ \Phi $. Instead of taking a large number of high-resolution measurements and discarding the majority of them, we take considerably fewer random measurements, with $ \Phi $ being a random matrix, and reconstruct the original $ x $ with high probability from its sparse representation.
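The measurement model can be sketched in a few lines of standard-library Python. This is only an illustration: the function name `make_problem`, the sizes, the noise level and the $ 1/\sqrt{M} $ scaling of the Gaussian entries are assumptions, not taken from the source.

```python
import random

def make_problem(N=64, M=24, k=4, noise_std=0.01, seed=0):
    """Draw a k-sparse x, a Gaussian sensing matrix Phi, and y = Phi x + e.

    All sizes and the noise level are illustrative assumptions.
    """
    rng = random.Random(seed)
    # k-sparse signal: choose k positions and fill them with Gaussian values
    x = [0.0] * N
    for i in rng.sample(range(N), k):
        x[i] = rng.gauss(0.0, 1.0)
    # M x N sensing matrix with i.i.d. N(0, 1/M) entries (assumed scaling)
    scale = 1.0 / M ** 0.5
    Phi = [[rng.gauss(0.0, scale) for _ in range(N)] for _ in range(M)]
    # noisy measurements y = Phi x + e, one entry per row of Phi
    y = [sum(p * v for p, v in zip(row, x)) + rng.gauss(0.0, noise_std)
         for row in Phi]
    return x, Phi, y

x, Phi, y = make_problem()
print("measurements:", len(y), "signal length:", len(x))
```

Only `len(y) = M` numbers are observed, far fewer than the `N` unknowns, which is why sparsity is needed to make reconstruction possible.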

Sparsity

A vector $ x \in \mathbb{R}^N $ is said to be $ k $-sparse if it has at most $ k $ nonzero coefficients. The set of all $ k $-sparse vectors is denoted by $ \Sigma_k = \{x : \|x\|_0 \leq k \} $. There are $ \binom{N}{k} $ different supports of size $ k $. If we draw the support of a $ k $-sparse $ x $ uniformly at random, it has entropy $ \log \binom{N}{k} \approx k \log (N/k) $, so about that many bits are needed to encode the support of an element of $ \Sigma_k $. [Measurements vs Bits]
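To get a feel for the size of $ \log \binom{N}{k} $, the short sketch below compares the exact count with the $ k \log(N/k) $ approximation. Base-2 logarithms are assumed here (the text speaks of bits), and the function names are hypothetical.

```python
import math

def support_bits(N, k):
    """Exact number of bits needed to index one of the C(N, k) supports."""
    return math.log2(math.comb(N, k))

def approx_bits(N, k):
    """The k * log2(N / k) approximation from the text (base 2 assumed)."""
    return k * math.log2(N / k)

for N, k in [(100, 5), (1000, 10), (10000, 20)]:
    print(N, k, round(support_bits(N, k), 1), round(approx_bits(N, k), 1))
```

The two quantities agree up to a term of order $ k $, since $ (N/k)^k \leq \binom{N}{k} \leq (eN/k)^k $.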

The goal is to search for the sparsest $ x \in \Sigma_k $ given the measurement $ y $ and the constraint matrix $ \Phi $.

This is equivalent to minimizing $ \|x\|_0 $ over the set $ \{ x \in \mathbb{R}^N : \Phi x = y\} $.

This search problem can be formulated as an $ l_0 $ program, stated after the definition of the support.

The support of $ \mathbf{x} $ is $ supp(\mathbf{x}) = \{i \in [N] : x_i \neq 0 \} $. We say $ \mathbf{x} $ is $ k $-sparse when $ |supp(\mathbf{x})| \leq k $.

We are interested in the solution with the smallest support, i.e. the one that minimizes $ |supp(\mathbf{x})| $.
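The definitions of support and $ k $-sparsity translate directly into code. In this small sketch the helper names are hypothetical, and indices are 0-based, whereas the text uses $ [N] = \{1, \dots, N\} $.

```python
def supp(x):
    """Indices of the nonzero entries of x (0-based)."""
    return [i for i, v in enumerate(x) if v != 0.0]

def l0_norm(x):
    """Size of the support, i.e. the number of nonzero entries."""
    return len(supp(x))

def is_k_sparse(x, k):
    """True when x has at most k nonzero entries."""
    return l0_norm(x) <= k

x = [0.0, 1.5, 0.0, -2.0]
print(supp(x), l0_norm(x), is_k_sparse(x, 2))
```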

The $ l_0 $ program

$ \mathbf{\hat{x}} = \underset{\mathbf{x}}{\arg\min} \| \mathbf{x}\|_0 \quad s.t. \quad \mathbf{y} = \Phi \mathbf{x} $, which is a combinatorial NP-hard problem. Moreover, if noise is present, the recovery is not stable. [Baraniuk, "Compressive Sensing"]

RIP matrices

The sensing matrix $ \Phi $ must satisfy the RIP. Random Gaussian and Bernoulli matrices satisfy it with high probability when $ M = \mathcal{O}(k \log(N/k)) $, which is the number of measurements required for standard compressive sensing to recover a $ k $-sparse signal with high probability.

Let $ [ N ] = \{ 1, \dots , N \} $ be the index set that enumerates the columns of $ \Phi $ and the entries of $ x $. Since $ M \ll N $, the system $ \Phi x = y $ is underdetermined and has infinitely many solutions. Minimizing the $ l_2 $ norm over these solutions generally does not give a sparse solution, whereas minimizing the $ l_1 $ norm tends to return a sparse one.
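A tiny worked example, chosen only for illustration and not taken from the source, shows the difference. Take $ M = 1 $, $ N = 2 $, $ \Phi = \begin{bmatrix} 1 & 2 \end{bmatrix} $ and $ y = 2 $, so the solutions form the line $ x_1 + 2 x_2 = 2 $.

$ \underset{x}{\arg\min} \|x\|_2 \ s.t. \ x_1 + 2x_2 = 2 \quad \Rightarrow \quad x = \Phi^T (\Phi \Phi^T)^{-1} y = \left( \tfrac{2}{5}, \tfrac{4}{5} \right) $

$ \underset{x}{\arg\min} \|x\|_1 \ s.t. \ x_1 + 2x_2 = 2 \quad \Rightarrow \quad x = (0, 1) $

The $ l_2 $ solution spreads the energy over both entries, while the $ l_1 $ solution has a single nonzero entry. On the line, $ \|x\|_1 = |2 - 2x_2| + |x_2| $, which attains its minimum value $ 1 $ at $ x_2 = 1 $.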

Before we get into the RIP, let us introduce the RIC.

The restricted isometry constant (RIC) of order $ s $ is the smallest $ \delta_s \geq 0 $ such that the RIP condition, introduced by Candès and Tao, holds for every $ x $ supported on an index set $ S \subseteq [N] $ with $ |S| \leq s $.

RIP

Random Gaussian and Bernoulli matrices satisfy the RIP with high probability.

The RIP of order $ s $ requires that, for all $ s $-sparse $ x $,

$ (1 - \delta_s) \| x \|_2 ^2 \leq \| \Phi x \|_2^2 \leq (1 + \delta_s) \| x \|_2 ^2 $
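The inequality can be probed numerically by sampling random $ s $-sparse vectors and recording how far $ \| \Phi x \|_2^2 / \| x \|_2^2 $ strays from 1. The sketch below is only an illustration with hypothetical function names and sizes. Because it samples rather than enumerates all supports, it can only give a lower bound on $ \delta_s $ and is not a verification of the RIP.

```python
import random

def rip_ratio(Phi, x):
    """Return ||Phi x||_2^2 / ||x||_2^2 for a nonzero x."""
    Phix = [sum(p * v for p, v in zip(row, x)) for row in Phi]
    return sum(v * v for v in Phix) / sum(v * v for v in x)

def ric_lower_bound(Phi, s, trials=200, seed=0):
    """Largest observed | ratio - 1 | over random s-sparse x.

    This is only a lower bound on delta_s: unsampled supports may be worse.
    """
    rng = random.Random(seed)
    N = len(Phi[0])
    worst = 0.0
    for _ in range(trials):
        x = [0.0] * N
        for i in rng.sample(range(N), s):
            x[i] = rng.gauss(0.0, 1.0)
        worst = max(worst, abs(rip_ratio(Phi, x) - 1.0))
    return worst

# Gaussian matrix with N(0, 1/M) entries (assumed scaling and sizes)
rng = random.Random(1)
M, N = 40, 80
Phi = [[rng.gauss(0.0, 1.0 / M ** 0.5) for _ in range(N)] for _ in range(M)]
print("observed lower bound on delta_3:", ric_lower_bound(Phi, 3))
```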

Let $ \Phi \in \mathbb{R}^{M \times N} $ satisfy the RIP and let $ [N] $ be the index set. For a $ k $-sparse $ \mathbf{x} \in \mathbb{R}^N $ with support $ S = supp(\mathbf{x}) \subseteq [N] $, the restriction of $ \mathbf{x} $ to $ S $ is denoted by $ x_{|S} $, and $ \Phi_{|S} $ denotes the submatrix of $ \Phi $ whose columns are indexed by $ i \in S $.

In search of a unique solution we arrive at the $ l_0 $ optimization problem stated above, where $ \|x\|_0 = |supp(x)| $.

Theory

Verification of the Sensing matrix

Checking whether a given $ \Phi $ satisfies the RIP is NP-hard in general, so it is unreasonable to ask a computer to verify that an arbitrary matrix satisfies the RIP. To get around this combinatorially hard problem, we need an understanding of which matrices satisfy the RIP.

Some examples are:

- Random sensing matrices: Gaussian, Bernoulli, Rademacher

- Deterministic sensing matrices: binary, bipolar, ternary, Vandermonde

- Structural sensing matrices: Toeplitz, circulant, Hadamard

- Optimized sensing matrices

(Parkale, Nalbalwar, Sensing Matrices in Compressed Sensing)

Different sensing matrices suit different problems, but in general we want an alternative to Gaussian matrices because it reduces the computational complexity.

Definition: Mutual Coherence

Let $ \Phi \in \mathbb{R}^{M \times N} $ with columns $ a_1, \dots, a_N $. The mutual coherence $ \mu_\Phi $ is defined by:

$ \mu_{\Phi} = \underset{i \neq j}{\max} \frac{| \langle a_i, a_j \rangle |}{ \| a_i \|_2 \| a_j \|_2} $ [3]

The Welch bound states that $ \mu_\Phi \geq \sqrt{\frac{N - M}{M(N-1)}} $ [3]. An analogous quantity measures the coherence between $ \Phi $ and $ \Psi $. We want a small $ \mu_{\Phi} $ because the columns of $ \Phi $ are then close to orthonormal, which favors the RIP. Also, $ \mu_{\Phi} $ will be needed for the step size in the IHT algorithm that follows.
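Unlike the RIP, the mutual coherence is cheap to compute, since it only involves pairs of columns. A minimal sketch, with hypothetical function names and a toy matrix chosen for illustration:

```python
import math

def mutual_coherence(Phi):
    """Largest normalized inner product between two distinct columns of Phi."""
    M, N = len(Phi), len(Phi[0])
    cols = [[Phi[i][j] for i in range(M)] for j in range(N)]
    norms = [math.sqrt(sum(v * v for v in c)) for c in cols]
    best = 0.0
    for i in range(N):
        for j in range(i + 1, N):
            inner = abs(sum(a * b for a, b in zip(cols[i], cols[j])))
            best = max(best, inner / (norms[i] * norms[j]))
    return best

def welch_bound(M, N):
    """Welch lower bound on the coherence of an M x N matrix (N > 1)."""
    return math.sqrt((N - M) / (M * (N - 1)))

# toy 2 x 3 matrix with columns (1,0), (0,1), (1,1)
Phi = [[1.0, 0.0, 1.0],
       [0.0, 1.0, 1.0]]
print(mutual_coherence(Phi), welch_bound(2, 3))
```

For this toy matrix the coherence is $ 1/\sqrt{2} $, which sits above the Welch bound of $ 1/2 $, as it must.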

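Coherence connects to the RIP through the RIC: for a matrix with unit-norm columns, $ \delta_s \leq (s - 1) \mu_\Phi $, so a small coherence yields a small RIC.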

Algorithm IHT

The $ l_1 $ convex program mentioned in the introduction has an equivalent unconstrained optimization program.

The $ l_0 $-regularized form is $ \underset{\mathbf{x}}{\min} \| \mathbf{y} - \Phi \mathbf{x} \|_2^2 + \lambda \| \mathbf{x} \|_0 $ (cite IT for sparse approximations), and its $ l_1 $ counterpart is $ \hat{\mathbf{x}} = \underset{\mathbf{x}}{\arg\min} \frac{1}{M} \| \mathbf{y} - \Phi \mathbf{x}\|_2^2 + \lambda \| \mathbf{x}\|_1 $ [3]. In statistics, this $ l_1 $-regularized problem is called the LASSO, with $ \lambda $ as the regularization parameter. It is the closest convex relaxation of the $ l_0 $ program, the first program mentioned in the introduction. [The Benefit of Group Sparsity]

Let $ f(\mathbf{x}) = \frac{1}{2} \| \mathbf{y} - \Phi \mathbf{x} \|_2^2 $ be the data-fit term. Its gradient at the current iterate is $ z^{(n)} = \nabla f(\mathbf{x}^{(n)}) = - \Phi^T( \mathbf{y} - \Phi \mathbf{x}^{(n)}) $. Then $ \mathbf{x}^{(n+1)} = \mathcal{H}_s\left( \mathbf{x}^{(n)} - \tau z^{(n)}\right) $, where $ \tau $ is the step size.

Define the thresholding operator as $ \mathcal{H}_s[\mathbf{x}] = \underset{z \in \Sigma_s}{\arg\min} \| \mathbf{x} - z\|_2 $, which selects the best $ s $-term approximation of $ \mathbf{x} $: it keeps the $ s $ largest-magnitude entries and sets the rest to zero.
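The operator amounts to a sort and a mask. A minimal sketch, where the function name is hypothetical and ties between equal magnitudes are broken arbitrarily:

```python
def hard_threshold(x, s):
    """Keep the s largest-magnitude entries of x and zero out the rest."""
    # indices sorted by decreasing magnitude, truncated to the top s
    order = sorted(range(len(x)), key=lambda i: abs(x[i]), reverse=True)
    keep = set(order[:s])
    return [v if i in keep else 0.0 for i, v in enumerate(x)]

print(hard_threshold([0.5, -3.0, 2.0, 0.1], 2))  # [0.0, -3.0, 2.0, 0.0]
```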

The stopping criterion is $ \| y - \Phi \mathbf{x}^{(n)}\|_2 \leq \epsilon $. Convergence of IHT is guaranteed when the RIC satisfies $ \delta_{3s} < \frac{1}{\sqrt{32}} $ [4].

- Input: $ \Phi $, $ \mathbf{y} $ and the sparsity level $ s $, with $ \mathbf{y} = \mathbf{\Phi} \mathbf{x} + \mathbf{e} $

- Output: $ IHT(\mathbf{y}, \mathbf{\Phi}, s) $

- Set $ x^{(0)} = \mathbf{0} $, $ n = 0 $

- While the stopping criterion is false do

- $ x^{(n+1)} \leftarrow \mathcal{H}_{s} \left[ x^{(n)} + \Phi^* (\mathbf{y} - \mathbf{\Phi x}^{(n)}) \right] $

- $ n \leftarrow n + 1 $

- end while

- return: $ IHT(\mathbf{y}, \mathbf{\Phi}, s) \leftarrow \mathbf{x}^{(n)} $

$ \Phi^* $ is the adjoint of $ \Phi $, i.e. its conjugate transpose. For a real matrix this is simply $ \Phi^T $.

Numerical Example

We will experiment a little to make sure it works. If $ \Phi $ is a Gaussian random matrix, then we know that it satisfies the RIP with high probability, and IHT will reconstruct the true signal $ x $, as $ l_1 $ minimization does. (cite Ca, Ro, ta, Robust uncertainty exact signal) (cite Blu, Davies IHT for CS) In other words, we do not know in general whether $ \Phi $ satisfies the RIP unless we solve an NP-hard problem. However, because $ \Phi $ is Gaussian, we can rely on it satisfying the RIP with high probability and skip the work of fully verifying the RIP. Donoho proves that nearly all matrices are sensing matrices.
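The IHT listing above can be sketched in standard-library Python as follows. This is an illustration under stated assumptions, not a reference implementation. The listing uses a unit step, which is the default `tau=1.0` here. The demo instead uses a conservative step `1 / (||Phi||_1 ||Phi||_inf)`, an assumption introduced so that the residual cannot increase, at the price of slower convergence. All function names and problem sizes are hypothetical.

```python
import random

def matvec(A, x):
    """A x for a matrix stored as a list of rows."""
    return [sum(a * v for a, v in zip(row, x)) for row in A]

def rmatvec(A, r):
    """A^T r, i.e. the adjoint of a real matrix applied to r."""
    return [sum(A[i][j] * r[i] for i in range(len(A))) for j in range(len(A[0]))]

def norm2(v):
    return sum(t * t for t in v) ** 0.5

def hard_threshold(x, s):
    """Keep the s largest-magnitude entries of x, zero the rest."""
    order = sorted(range(len(x)), key=lambda i: abs(x[i]), reverse=True)
    keep = set(order[:s])
    return [v if i in keep else 0.0 for i, v in enumerate(x)]

def safe_step(Phi):
    """Assumed conservative step: 1/(||Phi||_1 ||Phi||_inf) <= 1/||Phi||_2^2."""
    max_col = max(sum(abs(row[j]) for row in Phi) for j in range(len(Phi[0])))
    max_row = max(sum(abs(a) for a in row) for row in Phi)
    return 1.0 / (max_col * max_row)

def iht(Phi, y, s, tau=1.0, eps=1e-6, max_iter=300):
    """Iterate x <- H_s[x + tau * Phi^T (y - Phi x)] starting from x = 0."""
    x = [0.0] * len(Phi[0])
    for _ in range(max_iter):
        r = [yi - pi for yi, pi in zip(y, matvec(Phi, x))]
        if norm2(r) <= eps:  # stopping criterion ||y - Phi x||_2 <= eps
            break
        g = rmatvec(Phi, r)
        x = hard_threshold([xi + tau * gi for xi, gi in zip(x, g)], s)
    return x

# noiseless demo with a Gaussian sensing matrix (sizes are assumptions)
rng = random.Random(0)
M, N, s = 30, 60, 3
Phi = [[rng.gauss(0.0, 1.0 / M ** 0.5) for _ in range(N)] for _ in range(M)]
x_true = [0.0] * N
for i in rng.sample(range(N), s):
    x_true[i] = rng.gauss(0.0, 1.0)
y = matvec(Phi, x_true)

x_hat = iht(Phi, y, s, tau=safe_step(Phi))
residual = norm2([yi - pi for yi, pi in zip(y, matvec(Phi, x_hat))])
print("nonzeros:", sum(1 for v in x_hat if v != 0.0), "residual:", residual)
```

With this step size each iteration minimizes a quadratic upper bound of the data-fit term over $ \Sigma_s $, so the residual never grows. Whether the true support is recovered still depends on $ \Phi $ behaving like an RIP matrix, which the code does not check.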

Iterative Hard Thresholding IHT

Applications

Netflix problem

Conclusion

References

- ↑ D. L. Donoho, “Compressed sensing,” vol. 52, pp. 1289–1306, 2006, doi: 10.1109/tit.2006.871582.

- ↑ E. J. Candès and T. Tao, “Decoding by linear programming,” IEEE Trans. Inf. Theory, vol. 51, no. 12, 2005, doi: 10.1109/TIT.2005.858979.

- ↑ T. Blumensath and M. E. Davies, “Iterative Hard Thresholding for Compressed Sensing,” May 2008.

- ↑ S. Foucart and H. Rauhut, A mathematical introduction to compressive sensing. New York [u.a.]: Birkhäuser, 2013.

- ↑ R. G. Baraniuk, “Compressive Sensing [Lecture Notes],” IEEE Signal Processing Magazine, vol. 24, no. 4, 2007, doi: 10.1109/MSP.2007.4286571.