Interior-point method for LP

Authors: Tomas Lopez Lauterio, Rohit Thakur and Sunil Shenoy (SysEn 6800 Fall 2020)

Steward: Dr. Fengqi You and Akshay Ajagekar

Introduction

Linear programming problems seek to optimize linear functions subject to linear constraints. Linear programming has many applications, including inventory control, production scheduling, transportation optimization and efficient manufacturing processes. The simplex method has long been a popular way to solve these problems and has served these industries well. Over the past 40 years, however, there have been significant advances in algorithms that solve these problems more efficiently, especially when the problems become very large in terms of variables and constraints.[1] [2] In 1984, Karmarkar [3] published a paper introducing an interior-point method for linear programming. A simple way to see the difference between the two approaches is that the simplex method moves along the edges of a polytope towards a vertex with a lower value of the cost function, whereas an interior-point method begins its iterations inside the polytope and moves towards the lowest-cost vertex without regard for edges. On large problems this approach can reduce the number of iterations needed to reach that vertex, thereby reducing the computational time needed to solve the problem.

Lagrange Function

Before describing the interior-point method in detail, there are a few concepts that are helpful to understand. The first key concept is the Lagrange function. The Lagrange function incorporates the constraints into a modified objective function in such a way that a constrained minimizer $ (x^{*}) $ is connected to an unconstrained minimizer $ \left \{x^{*},\lambda ^{*} \right \} $ of the augmented objective function $ L\left ( x , \lambda \right ) $, where the augmentation is achieved with $ p $ Lagrange multipliers, one per constraint.

To illustrate this point, consider a simple optimization problem:

minimize $ f\left ( x \right ) $

subject to: $ A \cdot x = b $,

where $ A \in R^{p \times n} $ is assumed to have full row rank.

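The Lagrange function can be written as:

$ L(x, \lambda ) = f(x) - \sum_{i=1}^{p}\lambda _{i}\cdot a_{i}(x) $

where $ a_{i}(x) = a_{i}^{T}x - b_{i} $ is the i-th equality constraint (with $ a_{i}^{T} $ the i-th row of A), and $ \lambda _{i} $ is the corresponding Lagrange multiplier.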
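As a small illustration of the idea above, the sketch below picks a hypothetical objective $ f(x) = \frac{1}{2}x^{T}x $ (not from the source) and assumes the constraint functions are $ a_{i}(x) = a_{i}^{T}x - b_{i} $. Setting the gradient of $ L(x, \lambda ) $ to zero then gives $ x = A^{T}\lambda $ and $ AA^{T}\lambda = b $, which can be solved directly. The function name and the test data are made up for this example.

```python
import numpy as np

def min_norm_with_equalities(A, b):
    """Minimize 0.5 * x^T x subject to A x = b using the Lagrange conditions.

    Stationarity of L(x, lam) = 0.5 * x^T x - lam^T (A x - b) gives
    x = A^T lam and A A^T lam = b (A is assumed to have full row rank).
    """
    lam = np.linalg.solve(A @ A.T, b)  # Lagrange multipliers
    x = A.T @ lam                      # constrained minimizer
    return x, lam

# Hypothetical data: two equality constraints in three variables.
A = np.array([[1.0, 1.0, 1.0],
              [1.0, -1.0, 0.0]])
b = np.array([3.0, 0.0])

x_star, lam_star = min_norm_with_equalities(A, b)
grad_L = x_star - A.T @ lam_star  # gradient of L with respect to x
print("x* =", x_star)
print("lambda* =", lam_star)
print("grad_x L =", grad_L)
```

The pair returned is an unconstrained stationary point of the Lagrange function, and its x-part satisfies the original constraints.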

Newton's Method

Another key concept is the solution of linear and non-linear equations using Newton's method.

Assume we have an unconstrained minimization problem of the form:

minimize $ g(x) $, where $ g(x) $ is a real-valued function of n variables.

A local minimum of this problem satisfies the following system of equations:

$ \nabla g(x) = \left [ \frac{\partial g(x)}{\partial x_{1}} \; \cdots \; \frac{\partial g(x)}{\partial x_{n}}\right ]^{T} = \left [ 0 \; \cdots \; 0 \right ]^{T} $

The Newton iteration for solving this system is:

$ x^{k+1} = x^{k} - \left [ \nabla ^{2} g(x^{k}) \right ]^{-1}\cdot \nabla g(x^{k}) $
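The iteration above can be sketched in a few lines of Python. The test function $ g(x) = e^{x_{1}} - x_{1} + x_{2}^{2} $, the function names, the tolerance and the iteration limit are all assumptions chosen for illustration; the linear system is solved directly rather than forming the inverse Hessian.

```python
import numpy as np

def newton_minimize(grad, hess, x0, tol=1e-10, max_iter=50):
    """Newton iteration x <- x - [hess(x)]^{-1} grad(x) until the gradient is small."""
    x = np.asarray(x0, dtype=float)
    for _ in range(max_iter):
        g = grad(x)
        if np.linalg.norm(g) <= tol:
            break
        # Solve hess(x) * step = g instead of inverting the Hessian explicitly.
        x = x - np.linalg.solve(hess(x), g)
    return x

# Hypothetical test function g(x) = exp(x1) - x1 + x2^2 with minimizer (0, 0).
def grad_g(x):
    return np.array([np.exp(x[0]) - 1.0, 2.0 * x[1]])

def hess_g(x):
    return np.array([[np.exp(x[0]), 0.0],
                     [0.0, 2.0]])

x_min = newton_minimize(grad_g, hess_g, [1.0, 1.0])
print("minimizer:", x_min)
```

Because this test function is strictly convex, the stationary point found by the iteration is its unique minimizer; for a general $ g(x) $ the iteration only finds a point where the gradient vanishes.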

Theory and algorithm

We start by forming a primal-dual pair of linear programs and use the Lagrange function and a barrier function to convert the constrained problems into unconstrained problems. These unconstrained problems are then solved using Newton's method as shown above.

Problem Formulation

Consider the primal-dual pair of problems below:

(Primal Problem formulation)

→ minimize $ c^{T}x $ subject to: $ Ax = b $ and $ x \geq 0 $ (1)

(Dual Problem formulation)

→ maximize $ b^{T}y $ subject to: $ A^{T}y + \lambda = c $ and $ \lambda \geq 0 $ (2)

The vector $ \lambda $ introduced here represents the dual slack variables.

Now we use the logarithmic barrier function to form two Lagrange functions, one for the primal and one for the dual problem above. The barrier term is subtracted in the primal (a minimization) and added in the dual (a maximization):

Lagrange function for Primal : $ L_{p}(x,y) = c^{T}\cdot x - \mu \cdot \sum_{j=1}^{n}\log(x_{j}) - y^{T}\cdot (Ax - b) $ (3)

Lagrange function for Dual : $ L_{d}(x,y,\lambda ) = b^{T}\cdot y + \mu \cdot \sum_{j=1}^{n}\log(\lambda _{j}) - x^{T}\cdot (A^{T}y + \lambda - c) $ (4)

Taking the partial derivatives of $ L_{p} $ and $ L_{d} $ with respect to the variables x, λ and y, and setting them to zero, we get the following equations:

$ Ax = b $ and $ x \geq 0 $ (5)

$ A^{T}y + \lambda = c $ and $ \lambda \geq 0 $ (6)

$ x_{j}\cdot \lambda _{j} = \mu $ for $ j = 1, 2, \ldots, n $ (7)

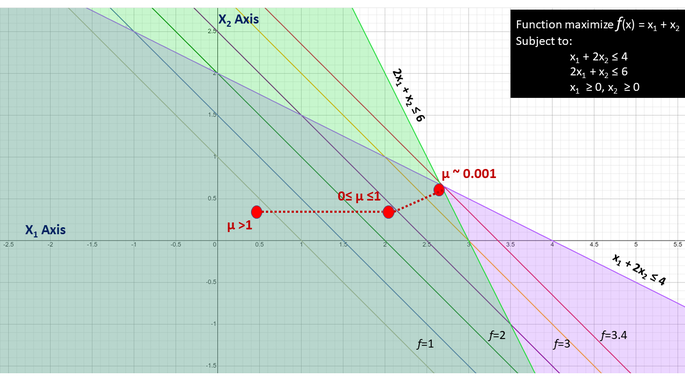

where μ is a strictly positive scalar parameter. For each μ > 0, the vectors {x(μ), y(μ), λ(μ)} satisfying equations (5), (6) and (7) can be viewed as points in $ R^{n} $, $ R^{p} $ and $ R^{n} $, respectively. As μ varies, these points trace out a trajectory called the "central path", which lies in the interior of the feasible region. The figure above shows a sample illustration of the central path. We start with a positive value of μ, and as μ goes to 0 we approach the optimal point.
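As a sketch of where condition (7) comes from, assume the primal barrier function carries a minus sign on the barrier term, $ c^{T}x - \mu \sum_{j}\log(x_{j}) - y^{T}(Ax - b) $, which is the usual convention for a minimization problem. The steps below are a reconstruction under that assumption, with $ \lambda _{j} $ introduced as shorthand for $ \mu / x_{j} $:

$ \frac{\partial L_{p}}{\partial x_{j}} = c_{j} - \frac{\mu}{x_{j}} - (A^{T}y)_{j} = 0 $

$ \lambda _{j} := \frac{\mu}{x_{j}} \;\Rightarrow\; A^{T}y + \lambda = c \quad \text{and} \quad x_{j}\cdot \lambda _{j} = \mu $

$ \frac{\partial L_{p}}{\partial y} = -(Ax - b) = 0 \;\Rightarrow\; Ax = b $

On the central path the duality gap is then $ c^{T}x - b^{T}y = (A^{T}y + \lambda )^{T}x - (Ax)^{T}y = \lambda ^{T}x = n\mu $, which is why driving μ to 0 drives the primal and dual objective values together.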

Let us define the following:

X = Diagonal ($ x_{1}^{0}, \ldots, x_{n}^{0} $)

$ \lambda $ = Diagonal ($ \lambda _{1}^{0}, \ldots, \lambda _{n}^{0} $); in the matrix equations below, $ \lambda $ denotes this diagonal matrix

$ e^{T} = (1, \ldots, 1) $, the vector of all 1's.

Using these newly defined terms, equation (7) can be written as:

$ X\cdot \lambda \cdot e = \mu \cdot e $

Iterations using Newton's Method

Now we employ Newton's iterative method to solve the following equations:

$ Ax - b = 0 $ (8)

$ A^{T}y + \lambda - c = 0 $ (9)

$ X\cdot \lambda \cdot e - \mu \cdot e = 0 $ (10)

Suppose we start from a point $ (x^{0}, y^{0}, \lambda ^{0}) $ that is strictly positive, i.e. $ x^{0} > 0 $ and $ \lambda ^{0} > 0 $. This point need not satisfy the equality constraints exactly, so we define two residual vectors, one for the primal and one for the dual equations:

$ \delta _{p} = b - A\cdot x^{0} $ (11)

$ \delta _{d} = c - A^{T}\cdot y^{0} - \lambda ^{0} $ (12)

Applying Newton's method to equations (8) - (10), with the residuals (11) and (12) on the right-hand side, we get:

$ \begin{bmatrix} A & 0 & 0\\ 0 & A^{T} & I\\ \lambda & 0 & X \end{bmatrix} \cdot \begin{bmatrix} \delta _{x}\\ \delta _{y}\\ \delta _{\lambda } \end{bmatrix} = \begin{bmatrix} \delta _{p}\\ \delta _{d}\\ \mu \cdot e - X\cdot \lambda \cdot e \end{bmatrix} $

A single iteration of Newton's method therefore involves the following equations, obtained by eliminating $ \delta _{\lambda } $ and $ \delta _{x} $ from the system above. In each iteration, we solve for the next values $ x^{k+1} $, $ y^{k+1} $ and $ \lambda ^{k+1} $:

$ (A\lambda ^{-1}XA^{T})\delta _{y} = b - \mu A\lambda ^{-1}e + A\lambda ^{-1}X\delta _{d} $ (13)

$ \delta _{\lambda} = \delta _{d} - A^{T}\delta _{y} $ (14)

$ \delta _{x} = \lambda ^{-1}\left [ \mu \cdot e - X\lambda e - X\delta _{\lambda}\right ] $ (15)

The primal and dual step lengths are then chosen so that x and λ remain non-negative:

$ \alpha _{p} = \min\left \{ \frac{-x_{j}}{\delta _{x_{j}}} \right \} $ for $ \delta _{x_{j}} < 0 $ (16)

$ \alpha _{d} = \min\left \{ \frac{-\lambda_{j}}{\delta _{\lambda_{j}}} \right \} $ for $ \delta _{\lambda_{j}} < 0 $ (17)

The values of the variables for the next iteration (k+1) are determined by:

$ x^{k+1} = x^{k} + \alpha _{p}\cdot \delta _{x} $

$ y^{k+1} = y^{k} + \alpha _{d}\cdot \delta _{y} $

$ \lambda^{k+1} = \lambda^{k} + \alpha _{d}\cdot \delta _{\lambda} $

The step lengths $ \alpha _{p} $ and $ \alpha _{d} $ are positive and limited to at most 1, i.e. $ 0 < \alpha _{p}, \alpha _{d} \leq 1 $.

After each iteration of Newton's method, we assess the relative duality gap given by the expression below and compare it against a small value ε:

$ \frac{c^{T}x^{k}-b^{T}y^{k}}{1+\left | b^{T}y^{k} \right |} \leq \varepsilon $

The value of ε can be chosen to be something small such as $ 10^{-6} $, which is essentially the permissible duality gap for the problem.
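The iteration described in equations (11) - (17) can be sketched as follows. Several details are assumptions that the text above does not specify: μ is reset each iteration to `sigma` times the average of $ x_{j}\lambda _{j} $, the step lengths are scaled by `step_scale` (slightly below 1) so the iterates stay strictly positive, the starting point is all ones, and the stopping test also checks the primal and dual residuals. The function names and the small test LP are hypothetical.

```python
import numpy as np

def step_length(v, dv, scale):
    """Largest step in [0, 1] keeping v + alpha * dv positive, as in (16)/(17)."""
    neg = dv < 0
    if not np.any(neg):
        return 1.0
    return min(1.0, scale * np.min(-v[neg] / dv[neg]))

def primal_dual_interior_point(A, b, c, eps=1e-8, sigma=0.1,
                               step_scale=0.9, max_iter=100):
    """Sketch of a primal-dual interior-point method for
    minimize c^T x subject to A x = b, x >= 0."""
    p, n = A.shape
    x, y, lam = np.ones(n), np.zeros(p), np.ones(n)  # assumed starting point
    for _ in range(max_iter):
        delta_p = b - A @ x              # primal residual, eq. (11)
        delta_d = c - A.T @ y - lam      # dual residual, eq. (12)
        rel_gap = abs(c @ x - b @ y) / (1.0 + abs(b @ y))
        if (rel_gap <= eps and np.linalg.norm(delta_p) <= eps
                and np.linalg.norm(delta_d) <= eps):
            break
        mu = sigma * (x @ lam) / n       # assumed rule for shrinking mu
        d = x / lam                      # diagonal of lambda^{-1} X
        M = (A * d) @ A.T                # A lambda^{-1} X A^T
        rhs = b - mu * (A @ (1.0 / lam)) + A @ (d * delta_d)
        dy = np.linalg.solve(M, rhs)                 # eq. (13)
        dlam = delta_d - A.T @ dy                    # eq. (14)
        dx = (mu - x * lam - x * dlam) / lam         # eq. (15)
        alpha_p = step_length(x, dx, step_scale)     # eq. (16)
        alpha_d = step_length(lam, dlam, step_scale) # eq. (17)
        x = x + alpha_p * dx
        y = y + alpha_d * dy
        lam = lam + alpha_d * dlam
    return x, y, lam

# Hypothetical LP in standard form (x3 and x4 are slack variables):
# minimize -x1 - 2*x2  subject to  x1 + x2 + x3 = 4,  x1 + 3*x2 + x4 = 6.
A = np.array([[1.0, 1.0, 1.0, 0.0],
              [1.0, 3.0, 0.0, 1.0]])
b = np.array([4.0, 6.0])
c = np.array([-1.0, -2.0, 0.0, 0.0])

x, y, lam = primal_dual_interior_point(A, b, c)
print("x =", x)
print("objective =", c @ x)
```

For this test problem the vertices of the feasible region are (0,0), (4,0), (3,1) and (0,2), so the optimum is at $ x_{1} = 3 $, $ x_{2} = 1 $ with objective value -5. Production solvers add safeguards (better starting points, predictor-corrector steps, sparse factorizations) that this sketch omits.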

Numerical Example

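Maximize $ 3X_{1} + 3X_{2} $

such that $ X_{1} + X_{2} \leq 4 $, $ X_{1} \geq 0 $, $ X_{2} \geq 0 $

The barrier form of the above primal problem is written below, where log denotes the natural logarithm:

$ P(X,\mu) = 3X_{1} + 3X_{2} + \mu \log(4 - X_{1} - X_{2}) + \mu \log(X_{1}) + \mu \log(X_{2}) $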

For μ > 0 the barrier function is strictly concave, so it has one and only one maximizer. To find this maximum point, we take the partial derivatives and set them to zero.

Taking the partial derivatives and setting them to 0, we get the equations below:

$ \frac{\partial P(X,\mu)}{\partial X_{1}} = 3 - \frac{\mu}{(4-X_{1}-X_{2})} + \frac{\mu}{X_{1}} = 0 $ (1)

$ \frac{\partial P(X,\mu)}{\partial X_{2}} = 3 - \frac{\mu}{(4-X_{1}-X_{2})} + \frac{\mu}{X_{2}} = 0 $ (2)

From equations (1) and (2) we can derive that $ X_{1} = X_{2} $ (3)

Plugging (3) into (1) we get

$ 3 - \frac{\mu}{(4-2X_{1})} + \frac{\mu}{X_{1}} = 0 $

The above equation can be converted into a quadratic equation as below:

$ 6X_{1}^2 - 3X_{1}(4-\mu)-4\mu = 0 $

The solution to the above quadratic equation can be written as below:

$ X_{1} = \frac{3(4-\mu)\pm \sqrt{9(4-\mu)^2 + 96\mu} }{12} = X_{2} $

Since X1 ≥ 0 and X2 ≥ 0, we take only the positive root of the above equation and solve for X1 and X2 at different values of μ. The outcome of these calculations is listed in the table below.

| μ | X1 | X2 | P(X, μ) | f(X) = 3X1 + 3X2 |
|---|---|---|---|---|
| 0 | 2 | 2 | 12 | 12 |
| 0.01 | 1.998 | 1.998 | 11.947 | 11.990 |
| 0.1 | 1.984 | 1.984 | 11.697 | 11.902 |
| 1 | 1.859 | 1.859 | 11.128 | 11.152 |
| 10 | 1.486 | 1.486 | 17.114 | 8.916 |
| 100 | 1.351 | 1.351 | 94.357 | 8.105 |
| 1000 | 1.335 | 1.335 | 871.052 | 8.011 |

From the above table it can be seen that:

- as μ gets close to zero, the barrier function becomes tight and close to the original objective function.

- in the limit μ = 0 the optimal solution is reached.
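The table entries can be reproduced with a short script that evaluates the positive root of the quadratic for each μ. This is a sketch: the function names are made up, the natural logarithm is assumed, and the case μ = 0 is handled separately because the barrier terms then vanish from P(X, μ).

```python
import math

def barrier_maximizer(mu):
    """Positive root of 6*X^2 - 3*X*(4 - mu) - 4*mu = 0, i.e. X1 = X2 on the central path."""
    disc = 9.0 * (4.0 - mu) ** 2 + 96.0 * mu
    return (3.0 * (4.0 - mu) + math.sqrt(disc)) / 12.0

def objective(x):
    """Original objective 3*X1 + 3*X2 evaluated at X1 = X2 = x."""
    return 6.0 * x

def barrier_value(x, mu):
    """Barrier function P(X, mu) evaluated at X1 = X2 = x."""
    if mu == 0.0:
        return objective(x)  # barrier terms vanish when mu = 0
    return objective(x) + mu * (math.log(4.0 - 2.0 * x) + 2.0 * math.log(x))

for mu in [0.0, 0.01, 0.1, 1.0, 10.0, 100.0, 1000.0]:
    x = barrier_maximizer(mu)
    print(f"mu={mu:8.2f}  X1=X2={x:.3f}  P={barrier_value(x, mu):9.3f}  f={objective(x):.3f}")
```

Reading the printed rows from large μ to small μ traces the central path towards the optimum at X1 = X2 = 2.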

Summary:

Maximum Value of Objective function =12

Optimal points X1 = 2 and X2 = 2

Applications

Primal-dual interior-point (PDIP) methods are commonly used in optimal power flow (OPF), where the goal is to maximize user utility and minimize operational cost while satisfying operational and physical constraints. The solution to the OPF problem needs to be available to grid operators within a few minutes or seconds because of fluctuations in loads or power generation. Newton-based primal-dual interior-point methods can achieve fast convergence on this OPF optimization problem.¹

Another application of PDIP is the minimization of losses and cost in generation and transmission in hydroelectric power systems.²

PDIP methods are commonly used in image processing. One application is image deblurring, where the constrained deblurring problem is formulated in primal-dual form and solved using a semi-smooth Newton's method.³

PDIP can be used to obtain a general formula for the shape derivative of the potential energy, describing the energy release rate for curvilinear cracks. Problems on cracks and their evolution have important applications in engineering and the mechanical sciences.⁴

Conclusion

The primal-dual interior-point method is a good alternative to the simplex method for solving linear programming problems. It shows superior performance and convergence on many large, complex problems. Simplex codes tend to be faster on small to medium problems, whereas primal-dual interior-point codes tend to be much faster on large problems.

References

- ↑ "Practical Optimization - Algorithms and Engineering Applications" by Andreas Antoniou and Wu-Sheng Lu, ISBN-10: 0-387-71106-6

- ↑ "Linear Programming - Foundations and Extensions - 3rd edition" by Robert J Vanderbei, ISBN-13: 978-0-387-74387-5.

- ↑ N. Karmarkar, "A new polynomial-time algorithm for linear programming", Combinatorica, Vol. 4, No. 4, 1984, pp. 373-395.

- "Computational Experience with Primal-Dual Interior Point Method for Linear Programming" by Irvin Lustig, Roy Marsten, David Shanno

- 1) A. Minot, Y. M. Lu and N. Li, "A parallel primal-dual interior-point method for DC optimal power flow," 2016 Power Systems Computation Conference (PSCC), Genoa, 2016, pp. 1-7, doi: 10.1109/PSCC.2016.7540826.

- 2) L. M. Ramos Carvalho and A. R. Leite Oliveira, "Primal-Dual Interior Point Method Applied to the Short Term Hydroelectric Scheduling Including a Perturbing Parameter," in IEEE Latin America Transactions, vol. 7, no. 5, pp. 533-538, Sept. 2009, doi: 10.1109/TLA.2009.5361190.

- 3) D. Krishnan, P. Lin and A. M. Yip, "A Primal-Dual Active-Set Method for Non-Negativity Constrained Total Variation Deblurring Problems," in IEEE Transactions on Image Processing, vol. 16, no. 11, pp. 2766-2777, Nov. 2007, doi: 10.1109/TIP.2007.908079.

- 4) V. A. Kovtunenko, Primal–dual methods of shape sensitivity analysis for curvilinear cracks with nonpenetration, IMA Journal of Applied Mathematics, Volume 71, Issue 5, October 2006, Pages 635–657,