Adam

Author: Akash Ajagekar (SYSEN 6800 Fall 2021)

Introduction

Adam optimizer is the extended version of stochastic gradient descent which has broader scope in future for deep learning applications in computer vision and natural processing. It is an optimization algorithm that can be an alternative for stochastic gradient descent process. The name is derived from adaptive moment estimation. Adam is proposed as the most efficient stochastic optimization which only requires first order gradients where memory requirement too less.[1] Before Adam many adaptive optimization techniques were introduced such as AdaGrad, RMSP which have good performance over SGD but in some cases have some disadvantages such as generalizing performance which is worse than that of the SGD in some cases. So Adam was introduced which is better in terms of generalizing performance.

Theory

Adam is a combination of two gradient descent methods which are explained below,

Momentum:

This is a optimization algorithm which takes into consideration the 'exponentially weighted average' and accelerates the gradient descent. It is an extension of gradient descent optimization algorithm.

The Momentum algorithm is solved in two parts. First is to calculate the change to position and second one is to update the old position with the updated position. The change in position is given by,

update = α * m_t

The new position or weights at time t is given by,

w_t+1 = w_t - update

Here in the above equation α(Step Size) is the Hyperparameter which controls the movement in the search space which is also called as learning rate. And, f'(x) is the derivative function or aggregate of gradients at time t.

where,

m_t = β * m_t - 1 + (1 - β) * (∂L / ∂w_t)

In the above equations m_t and m_t-1 are aggregate of gradients at time t and aggregate of gradient at time t-1.

According to [2] Momentum has the effect of dampening down the change in the gradient and, in turn, the step size with each new point in the search space.

Root Mean Square Propagation (RMSP):

RMSP is an adaptive optimization algorithm which is a improved version of AdaGrad . In AdaGrad we take the cumulative summation of squared gradients but, in RMSP we take the 'exponential average'.

It s given by,

w_t+1 = w_t - (αt / (vt + e) ^ 1/2) * (∂L / ∂w_t)

where,

vt = βvt - 1 + (1 - β) * (∂L / ∂w_t) ^ 2

Here,

Aggregate of gradient at t = m_t

Aggregate of gradient at t - 1 = m_t - 1

Weights at time t = w_t

Weights at time t + 1 = w_t + 1

αt = learning rate(Hyperparameter)

∂L = derivative of loss function

∂w_t = derivative of weights at t

β = Average parameter

e = constant

But as we know these two optimizers explained below have some problems such as generalizing performance. The article [3] tells us that Adam takes over the attributes of the above two optimizers and build upon them to give more optimized gradient descent.

Algorithm

Taking the equations used in the above two optimizers

m_t = β1 * m_t - 1 + (1 - β1) * (∂L / ∂w_t) and vt = β2vt - 1 + (1 - β2) * (∂L / ∂w_t) ^ 2

Initially both mt and vt are set to 0. Both tend to be more biased towards ) as β1 and β2 are equal to 1. By computing bias corrected m_t and vt, this problem is corrected by the Adam optimizer. The equations are as follows,

m'_t = m_t / (1 - β1 ^ t)

v't = vt / (1 - β2 ^ t)

Now as we are getting used to gradient descent after every iteration and hence it remains controlled and unbiased. Now substitute the new parameters in place of the old ones. We get,

w_t+1 = w_t - m'_t ( α / v't^1/2 + e)

Numerical Example

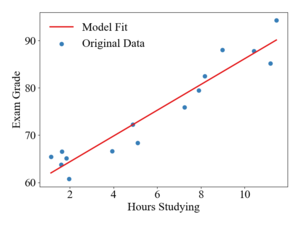

To illustrate how updates occur in the Adam algorithm, consider a linear, least-squares regression problem formulation. The table below shows a sample data-set of student exam grades and the number of hours spent studying for the exam. The goal of this example will be to generate a linear model to predict exam grades as a function of time spent studying.

| Hours Studying | 9.0 | 4.9 | 1.6 | 1.9 | 7.9 | 2.0 | 11.5 | 3.9 | 1.1 | 1.6 | 5.1 | 8.2 | 7.3 | 10.4 | 11.2 |

| Exam Grad | 88.0 | 72.3 | 66.5 | 65.1 | 79.5 | 60.8 | 94.3, | 66.7 | 65.4 | 63.8 | 68.4 | 82.5 | 75.9 | 87.8 | 85.2 |

The hypothesized model function will be

The cost function is defined as

Where the coefficient is used only to make the derivatives cleaner. The optimization problem can then be formulated as trying to find the values of that minimize the squared residuals of and .

For simplicity, parameters will be updated after every data point i.e. a batch size of 1. For a single data point the derivatives of the cost function with respect to and are

The initial values of will be set to [50, 1] and The learning rate, , is set to 0.1 and the suggested parameters for , , and are used. With the first data sample of , the computed gradients are

With and being initialized to zero, the calculations of and are

The bias-corrected terms are computed as

Finally, the parameter update is

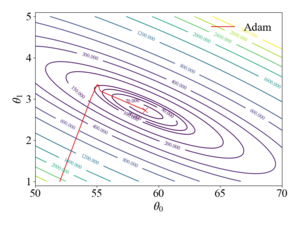

This procedure is repeated until the parameters have converged, giving values of . The figures to the right show the trajectory of the Adam algorithm over a contour plot of the objective function and the resulting model fit. It should be noted that the stochastic gradient descent algorithm with a learning rate of 0.1 diverges and with a rate of 0.01, SGD oscillates around the global minimum due to the large magnitudes of the gradient in the direction.

Applications

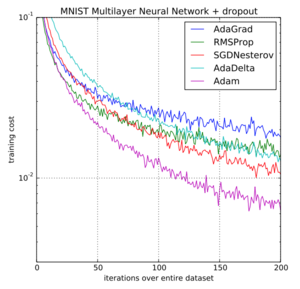

The Adam optimization algorithm has been widely used in machine learning applications to train model parameters. When used with backpropagation, the Adam algorithm has been shown to be a very robust and efficient method for training artificial neural networks and is capable of working well with a variety of structures and applications. In their original paper, the authors present three different training examples, logistic regression, multi-layer neural networks for classification of MNIST images, and a convolutional neural network (CNN). The training results from the original Adam paper showing the objective function cost vs. the iteration over the entire data set for the multi-layer neural network is shown to the right.

Variants of Adam

AdaMax

AdaMax[4] is a variant of the Adam algorithm proposed in the original Adam paper that uses an exponentially weighted infinity norm instead of the second-order moment estimate. The weighted infinity norm updated , is computed as

The parameter update then becomes

Nadam

The Nadam algorithm[5] was proposed in 2016 and incorporates the Nesterov Accelerate Gradient (NAG)[6], a popular momentum like SGD variation, into the first-order moment term.

Conclusion

Adam is a variant of the gradient descent algorithm that has been widely adopted in the machine learning community. Adam can be seen as the combination of two other variants of gradient descent, SGD with momentum and RMSProp. Adam uses estimations of the first and second-order moments of the gradient to adapt the parameter update. These moment estimations are computed via moving averages, and , of the gradient and the squared gradient respectfully. In a variety of neural network training applications, Adam has shown increased convergence and robustness over other gradient descent algorithms and is often recommended as the default optimizer for training.[7]

References

- ↑ https://arxiv.org/pdf/1412.6980.pdf ADAM: A METHOD FOR STOCHASTIC OPTIMIZATION

- ↑ Deep Learning (Adaptive Computation and Machine Learning series)

- ↑ https://www.geeksforgeeks.org/intuition-of-adam-optimizer/ Intuition of Adam Optimizer

- ↑ 4.0 4.1 Cite error: Invalid

<ref>tag; no text was provided for refs namedadam - ↑ Dozat, Timothy. Incorporating Nesterov Momentum into Adam. ICLR Workshop, no. 1, 2016, pp. 2013–16.

- ↑ Nesterov, Yuri. A method of solving a convex programming problem with convergence rate O(1/k^2). In Soviet Mathematics Doklady, 1983, pp. 372-376.

- ↑ "Neural Networks Part 3: Learning and Evaluation," CS231n: Convolutional Neural Networks for Visual Recognition, Stanford Unversity, 2020

![{\displaystyle (x,y)=[8.98,88.01]}](https://wikimedia.org/api/rest_v1/media/math/render/svg/9bbaa7917b46e820c748c61ff7146a37426acb34)

![{\displaystyle [58.98,2.72]}](https://wikimedia.org/api/rest_v1/media/math/render/svg/777165c49205a0dd06fd0af07d113861fbcd7b48)