Markov decision process: Difference between revisions


Revision as of 15:47, 12 December 2020

Author: Eric Berg (eb645), Fall 2020

Introduction

A Markov Decision Process (MDP) is a stochastic sequential decision-making method. Sequential decision making applies whenever a dynamic system is controlled by a decision maker who makes decisions sequentially over time. MDPs can be used to determine which action the decision maker should take given the current state of the system and its environment. The process takes into account information from the environment, actions performed by the agent, and rewards in order to decide the optimal next action. MDPs can be characterized as finite or infinite, and as continuous or discrete, depending on the set of actions and states available and the decision-making frequency. This article focuses on discrete MDPs with finite states and finite actions for the sake of simplified calculations and numerical examples. The name Markov refers to the Russian mathematician Andrey Markov, since the Markov Decision Process is based on the Markov Property. MDPs are often used as control schemes in machine learning applications, in particular as the decision-making framework in reinforcement learning. They are the basis by which the machine makes decisions and "learns" how to behave in order to achieve its goal.

Theory and Methodology

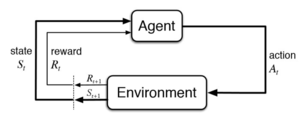

A Markov Decision Process makes decisions using information about the system's current state, the actions being performed by the agent, and the rewards earned based on states and actions.

A Markov decision process is made up of multiple fundamental elements: the agent, states, a model, actions, rewards, and a policy. The agent is the object or system being controlled that has to make decisions and perform actions. The agent lives in an environment that can be described using states, which contain information about the agent and the environment. The model determines the rules of the world in which the agent lives, in other words, how certain states and actions lead to other states. The agent can perform a fixed set of actions in any given state. The agent receives rewards based on its current state. A policy is a function that determines the agent's next action based on its current state.

MDP Framework:

- $S$: States ($s \in S$)

- $A$: Actions ($a \in A$)

- $P^{a}_{ss'}$: Model determining transition probabilities

- $R(s, a)$: Reward
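The four framework elements can be held in a small data structure. The sketch below is illustrative only: the class name `MDP`, its field names, and the two-state `toy` example are assumptions made for this illustration and do not come from the article.

```python
from dataclasses import dataclass


@dataclass
class MDP:
    """Container for a finite MDP (hypothetical layout, for illustration)."""
    states: tuple
    actions: tuple
    # transitions[(s, a)] maps each next state s' to its probability
    transitions: dict
    # rewards[(s, a)] is the immediate reward for taking action a in state s
    rewards: dict

    def is_valid(self):
        """True if every (state, action) row is a probability distribution."""
        return all(
            abs(sum(row.values()) - 1.0) < 1e-9
            for row in self.transitions.values()
        )


# Made-up two-state example, not taken from the article
toy = MDP(
    states=("low", "high"),
    actions=("wait", "work"),
    transitions={
        ("low", "wait"): {"low": 1.0},
        ("low", "work"): {"low": 0.3, "high": 0.7},
        ("high", "wait"): {"low": 0.4, "high": 0.6},
        ("high", "work"): {"high": 1.0},
    },
    rewards={
        ("low", "wait"): 0.0,
        ("low", "work"): -1.0,
        ("high", "wait"): 2.0,
        ("high", "work"): 1.0,
    },
)
```

Keying the model by `(state, action)` pairs keeps the "how certain states and actions lead to other states" idea explicit; other layouts, such as one matrix per action, would work equally well.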

In order to understand how the Markov Decision Process works, the Markov Property must first be defined. The Markov Property states that the future is independent of the past given the present. In other words, only the present is needed to determine the future, since the present contains all necessary information from the past. The Markov Property can be written in mathematical terms as:

$$P[S_{t+1} \mid S_{t}] = P[S_{t+1} \mid S_{1}, S_{2}, S_{3}, \ldots, S_{t}]$$

The above notation conveys that the probability of the next state given the current state is equal to the probability of the next state given all previous states. The Markov Property is relevant to the Markov Decision Process because only the current state is input into the policy function to determine the next action; the previous states and actions are not needed.
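The Markov Property can be checked empirically on a simulated chain. The following is a minimal sketch under assumed values: the two-state chain `P`, the helper names, and the sample size are all hypothetical. It compares the estimated probability of the next state given only the current state with the estimate that also conditions on the previous state; for a Markov chain the two should agree up to sampling noise.

```python
import random

# Hypothetical two-state chain: the next state depends on the current state only
P = {"A": {"A": 0.9, "B": 0.1}, "B": {"A": 0.5, "B": 0.5}}


def step(current, rng):
    """Sample the next state from the current state alone."""
    return "A" if rng.random() < P[current]["A"] else "B"


def simulate(n_steps, seed=0):
    """Generate a path of n_steps transitions starting in state A."""
    rng = random.Random(seed)
    path = ["A"]
    for _ in range(n_steps):
        path.append(step(path[-1], rng))
    return path


def estimate(path, current, nxt, previous=None):
    """Empirical P[next | current], or P[next | previous, current] if given."""
    hits = total = 0
    for i in range(1, len(path) - 1):
        if path[i] != current:
            continue
        if previous is not None and path[i - 1] != previous:
            continue
        total += 1
        hits += path[i + 1] == nxt
    return hits / total


path = simulate(200_000)
p_given_current = estimate(path, "A", "B")
p_given_history = estimate(path, "A", "B", previous="B")
```

Both estimates should land close to the assumed transition probability of 0.1, which is what the equality in the Markov Property predicts.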

The Policy and Value Function

The policy, $\Pi$, is a function that maps states to actions. The policy determines the optimal action given the current state in order to maximize reward.

There are various methods for finding the best policy. Each method tries to maximize rewards in some way, but they differ in which accumulation of rewards is maximized. The first method is to choose the action that maximizes the expected reward given the current state. This is the myopic method, which considers only the immediate reward at each time step. Next is the finite-horizon method, which tries to maximize the accumulated reward over a fixed number of time steps. Because many applications have infinite horizons, meaning the agent must always keep making decisions and continuously try to maximize its reward, another method is commonly used, known as the infinite-horizon method. In the infinite-horizon method, the goal is to maximize the expected sum of rewards over all future steps. The problem is that an infinite sum of equally weighted rewards may not converge, and the policy algorithm may get stuck in a loop. To avoid this, and to be able to prioritize short-term or long-term rewards, a discount factor, $\gamma \in [0,1]$, is added. If $\gamma$ is closer to 0, the policy chooses actions that prioritize more immediate rewards; if $\gamma$ is closer to 1, long-term rewards are prioritized.

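- Myopic: Maximize $E[r_{t} \mid \Pi, s_{t}]$, the expected reward for each state

- Finite-horizon: Maximize $E[\sum_{t=0}^{k} r_{t} \mid \Pi, s_{t}]$

- Discounted Infinite-horizon: Maximize $E[\sum_{t=0}^{\infty} \gamma^{t} r_{t} \mid \Pi, s_{t}]$, with $\gamma \in [0,1]$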
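To make the three objectives concrete, the sketch below evaluates them on a single fixed reward sequence. The sequence and the function name `discounted_return` are assumptions chosen for illustration; a real MDP would take an expectation over random trajectories rather than score one known sequence.

```python
def discounted_return(rewards, gamma):
    """Sum of gamma**t * r_t over a finite reward sequence."""
    return sum(gamma ** t * r for t, r in enumerate(rewards))


# Made-up reward sequence with a large reward arriving late
rewards = [1.0, 1.0, 1.0, 10.0]

myopic = rewards[0]                                   # immediate reward only
finite_horizon = discounted_return(rewards[:3], 1.0)  # undiscounted, t = 0..2
near_sighted = discounted_return(rewards, 0.1)        # gamma close to 0
far_sighted = discounted_return(rewards, 0.9)         # gamma close to 1
```

With these assumed numbers, a discount factor of 0.1 gives a return of about 1.12, dominated by the first reward, while 0.9 gives about 10.0 because the late reward of 10 still counts heavily.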

Most commonly, the discounted infinite-horizon method is used to determine the best policy. The value function, $V(s)$, is the expected sum of discounted rewards:

$$V(s) = E\left[\sum_{t=0}^{\infty} \gamma^{t} r_{t} \mid s_{t}\right]$$

Using the Bellman Equation, the value function can be decomposed into two parts: the immediate reward of the current state and the discounted value of the next state.

$$V(s) = E[r_{t+1} + \gamma V(s_{t+1}) \mid s_{t}]$$

The value function can be solved using iterative methods such as dynamic programming, Monte-Carlo evaluation, or temporal-difference learning.

The optimal policy is one that chooses the action with the largest value given the current state; that is, the policy selects the action that attains the maximum in:

$$\Pi(s) = \max_{a}\left[R(s,a) + \gamma \sum_{s' \in S} P_{ss'}^{a} V(s')\right]$$

The policy is a function of the current state, meaning that at each time step the action is chosen using only the present information. The optimal value function can be found using methods such as value iteration, policy iteration, or linear programming.
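Value iteration, one of the methods named above, can be sketched in a few lines. This is a minimal illustration rather than the article's own implementation: the two-state MDP in `P` and `R`, the discount factor of 0.9, and the stopping tolerance are all assumed values.

```python
# Hypothetical toy MDP: P[s][a] lists (probability, next_state) pairs
P = {
    0: {"stay": [(1.0, 0)], "move": [(0.8, 1), (0.2, 0)]},
    1: {"stay": [(1.0, 1)], "move": [(1.0, 0)]},
}
# R[s][a] is the immediate reward for taking action a in state s
R = {0: {"stay": 0.0, "move": -1.0}, 1: {"stay": 2.0, "move": 0.0}}


def q_value(V, s, a, gamma):
    """Immediate reward plus discounted expected value of the next state."""
    return R[s][a] + gamma * sum(p * V[s2] for p, s2 in P[s][a])


def value_iteration(gamma=0.9, tol=1e-10, max_iter=10_000):
    """Repeat Bellman backups until the values stop changing."""
    V = {s: 0.0 for s in P}
    for _ in range(max_iter):
        new_V = {s: max(q_value(V, s, a, gamma) for a in P[s]) for s in P}
        delta = max(abs(new_V[s] - V[s]) for s in P)
        V = new_V
        if delta < tol:
            break
    # The greedy policy picks, in each state, the action attaining the maximum
    policy = {s: max(P[s], key=lambda a: q_value(V, s, a, gamma)) for s in P}
    return V, policy


V, policy = value_iteration()
```

For this assumed MDP the values converge to roughly 16.34 for state 0 and 20 for state 1, and the greedy policy is to move out of state 0 and stay in state 1.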

The Algorithm

At each step, given state $s_{t}$:

- Update Value Function

- Update Policy and perform action

Numerical Example

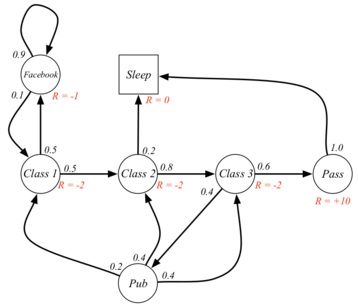

As an example, the Markov decision process can be applied to a college student, depicted to the right. In this case, the agent is the student. The states are the circles and squares in the diagram, and the arrows are the actions. The action between class states is "study". When the student is in Class 2, the allowable actions are to study or sleep. The model assigns a probability to each state given the previous state and action; these probabilities are written next to the arrows. Finally, the rewards associated with each state are written in red.

Assume the first state is Class 1 and $\gamma = 1$.

First, the value functions must be calculated for each state.

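Then, in state 1 (Class 1), the optimal policy chooses the action that leads to the state with the highest value:

$$\Pi(\text{Class 1}) = \max_{a}[-2 + 2.7,\; -1 - 2.03] = \max_{a}[0.7,\; -3.03] = 0.7$$

The maximum, 0.7, is attained by the action: study and go to Class 2.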

Therefore, the optimal policy in state Class 1 is to go to Class 2. Now, the optimal policy action can be decided in each state using the same process.
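The comparison for Class 1 can be reproduced directly. In the sketch below, the rewards and next-state values are the numbers from the example, with a discount factor of 1; the label "alternative action" is a placeholder, since the text does not name the second action, and the dictionary layout is an assumption made for illustration.

```python
GAMMA = 1.0  # discount factor assumed in the example

# Each option: immediate reward and value of the state it leads to
options = {
    "study and go to Class 2": {"reward": -2.0, "next_value": 2.7},
    "alternative action": {"reward": -1.0, "next_value": -2.03},  # placeholder name
}


def action_value(option, gamma=GAMMA):
    """Immediate reward plus discounted value of the next state."""
    return option["reward"] + gamma * option["next_value"]


values = {name: action_value(opt) for name, opt in options.items()}
best_action = max(values, key=values.get)
```

Here `best_action` is "study and go to Class 2", with a value of about 0.7 against about -3.03 for the other option.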

Applications

Markov Decision Processes have been used widely within reinforcement learning to teach robots or other computer-based systems how to do something they were previously unable to do. For example, Markov decision processes have been used to teach computers how to play games such as Pong, Pacman, or Go (as in AlphaGo). MDPs have been used to teach a simulated robot how to walk and run. MDPs are often applied in fields such as robotics, automated systems, manufacturing, economics, and stock trading.

Conclusion

A Markov Decision Process is a stochastic, sequential decision-making method based on the Markov Property. This process is fundamental in reinforcement learning applications and a core method for developing artificially intelligent systems. MDPs have been applied in various areas, such as controlling robots and other automated systems, and even in fields like economics.


Equations referenced in the text, in order of appearance:

$$P[S_{t+1} \mid S_{t}] = P[S_{t+1} \mid S_{1}, S_{2}, S_{3}, \ldots, S_{t}]$$

$$E[r_{t} \mid \Pi, s_{t}]$$

$$E\left[\sum_{t=0}^{k} r_{t} \mid \Pi, s_{t}\right]$$

$$E\left[\sum_{t=0}^{\infty} \gamma^{t} r_{t} \mid \Pi, s_{t}\right]$$

$$\gamma \in [0,1]$$

$$V(s) = E\left[\sum_{t=0}^{\infty} \gamma^{t} r_{t} \mid s_{t}\right]$$

$$V(s) = E[r_{t+1} + \gamma V(s_{t+1}) \mid s_{t}]$$

$$\Pi(s) = \max_{a}\left[R(s,a) + \gamma \sum_{s' \in S} P_{ss'}^{a} V(s')\right]$$

$$\Pi(\text{Class 1}) = \max_{a}[-2 + 2.7,\; -1 - 2.03] = \max_{a}[0.7,\; -3.03] = 0.7$$