Optimization with absolute values: Difference between revisions

(Revamped Method section) |

|||

| Line 11: | Line 11: | ||

An absolute value of a real number can be described as its distance away from zero, or the non-negative magnitude of the number. Thus, | An absolute value of a real number can be described as its distance away from zero, or the non-negative magnitude of the number. Thus, | ||

< | <nowiki>{\displaystyle |x|={\begin{cases}-x,&{\text{if }}x<0\\x,&{\text{if }}x\geq 0\end{cases}}}</nowiki> | ||

Absolute values can exist in optimization problems in two primary instances: in constraints and in the objective function. | Absolute values can exist in linear optimization problems in two primary instances: in constraints and in the objective function. | ||

=== Absolute Values in Constraints === | === Absolute Values in Constraints === | ||

Within | Within constraints, absolute value relations can be transformed into one of the following forms: | ||

{\displaystyle |X| = 0} | |||

{\displaystyle |X| \le C} | |||

{\displaystyle |X| \ge C} | |||

Where {\textstyle X} is a linear combination ({\textstyle ax_1 + bx_2 + ...} where {\textstyle a, b} are constants) and {\textstyle C} is a constant {\textstyle > 0}. | |||

==== {\displaystyle |X| = 0} ==== | |||

In this form, the only possible solution is if | |||

{\displaystyle X = 0} | |||

simplifying the constraint. Note that this solution also occurs if the constraint is in the form | |||

{\displaystyle |X| \le 0} | |||

due to the same conclusion that the only possible solution is {\textstyle X = 0}. | |||

==== {\displaystyle |X| \le C} ==== | |||

The second form a linear constraint can exist in is | |||

{\displaystyle |X|\leq C} | |||

In this case, an equivalent feasible solution can be described by splitting the constraint into two: | |||

As seen visually, the feasible region has a gap and thus non-convex. The expressions also make it impossible for both to simultaneously hold true. This means that it is not possible to transform constraints in this form to linear equations. An approach to reach a solution for this particular case exists in the form of | {\displaystyle X\leq C} | ||

{\displaystyle -X\leq C} | |||

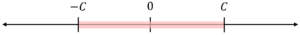

The solution can be understood visually since | |||

{\textstyle X} | |||

must lie between | |||

{\textstyle -C} | |||

and | |||

{\textstyle C} | |||

, as shown below:[[File:Number Line X Less Than C.png|none|thumb]] | |||

==== {\displaystyle |X| \ge C} ==== | |||

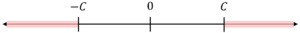

Visually, the solution space for the last form is the complement of the second solution above, resulting in the following representation:[[File:Number Line for X Greater Than C.png|none|thumb]]In expression form, the solutions can be written as: | |||

{\displaystyle X\geq C} | |||

{\displaystyle -X\geq C} | |||

As seen visually, the feasible region has a gap and thus non-convex. The expressions also make it impossible for both to simultaneously hold true. This means that it is not possible to transform constraints in this form to linear equations. | |||

An approach to reach a solution for this particular case exists in the form of Mixed-Integer Linear Programming, where only one of the equations above is “active”. | |||

The inequality can be reformulated into the following: | |||

{\displaystyle X + N*Y \ge C} | |||

{\displaystyle -X + N*(1-Y) \ge C} | |||

{\displaystyle Y = 0, 1} | |||

With this new set of constraints, a large constant {\textstyle N} is introduced, along with a binary variable {\textstyle Y}. So long as {\textstyle N} is sufficiently larger than the upper bound of {\textstyle X + C}, the large constant multiplied with the binary variable ensures that one of the constraints must be satisfied. For instance, if {\textstyle Y = 0}, the new constraints will resolve to: | |||

{\displaystyle X \ge C} | |||

{\displaystyle -X + N \ge C} | |||

Since {\textstyle N} is sufficiently large, the latter constraint will always be satisfied, leaving only one relation active: {\textstyle X \ge C}. Functionally, this allows for the XOR logical operation of {\textstyle X \geq C} and {\textstyle -X \geq C}. | |||

=== Absolute Values in Objective Functions === | === Absolute Values in Objective Functions === | ||

In objective functions, to leverage transformations of absolute functions, | In objective functions, to leverage transformations of absolute functions, all constraints must be linear. | ||

Similar to the case of absolute values in constraints, there are different approaches to the reformation of the objective function, depending on the satisfaction of sign constraints. The satisfaction of sign constraints is when the coefficient signs of the absolute terms must all be either: | |||

* Positive for a minimization problem | |||

* Negative for a maximization problem | |||

==== Sign Constraints are Satisfied ==== | |||

At a high level, the transformation works similarly to the second case of absolute value in constraints – aiming to bound the solution space for the absolute value term with a new variable, $\textstyle Z$. | |||

If {\textstyle |X|} is the absolute value term in our objective function, two additional constraints are added to the linear program: | |||

{\displaystyle X\leq Z} | |||

{\displaystyle -X\leq Z} | |||

The {\textstyle |X|} term in the objective function is then replaced by {\textstyle Z}, relaxing the original function into a collection of linear constraints. | |||

==== Sign Constraints are Not Satisfied ==== | |||

In order to transform problems where the coefficient signs of the absolute terms do not fulfill the conditions above, a similar conclusion is reached to that of the last case for absolute values in constraints – the use of integer variables is needed to reach an LP format. | |||

The following constraints need to be added to the problem: | |||

{\displaystyle X + N*Y \ge Z} | |||

{\displaystyle -X + N*(1-Y) \ge Z} | |||

{\displaystyle X \le Z} | |||

{\displaystyle -X \le Z} | |||

{\displaystyle Y = 0, 1} | |||

Again, {\textstyle N} is a large constant, {\textstyle Z} is a replacement variable for {\textstyle |X|} in the objective function, and {\textstyle Y} is a binary variable. The first two constraints ensure that one and only one constraint is active while the other will be automatically satisfied, following the same logic as above. The third and fourth constraints ensure that {\textstyle Z} must be equal to {\textstyle |X|} and has either a positive or negative value. For instance, for the case of {\textstyle Y = 0}, the new constraints will resolve to: | |||

{\displaystyle X \ge Z} | |||

{\displaystyle -X + N \ge Z} | |||

{\displaystyle X \le Z} | |||

{\displaystyle -X \le Z} | |||

As {\textstyle N} is sufficiently large ({\textstyle N} must be at least {\textstyle 2|X|} for this approach), the second constraint must be satisfied. Since {\textstyle Z} is non-negative, the fourth constraint must also be satistfied. The remaining constraints, {\textstyle X \ge Z} and {\textstyle X \le Z} can only be satisfied when {\textstyle Z = X} and is of non-negative signage. Together, these constraints will allow for the selection of the largest {\textstyle |X|} for maximization problems (or smallest for minimization problems). | |||

==Numerical Example== | ==Numerical Example== | ||

Revision as of 22:25, 9 December 2020

Authors: Matthew Chan (mdc297), Yilian Yin (yy896), Brian Amado (ba392), Peter (pmw99), Dewei Xiao (dx58) - SYSEN 5800 Fall 2020

Steward: Fengqi You

Introduction

Absolute values can make it relatively difficult to determine the optimal solution when they are handled without first converting the problem to standard form. Converting the objective function is therefore a good first step in solving optimization problems with absolute values. Once converted, the problem can be solved using linear programming techniques.

Method

Defining Absolute Values

An absolute value of a real number can be described as its distance away from zero, or the non-negative magnitude of the number. Thus,

{\displaystyle |x|={\begin{cases}-x,&{\text{if }}x<0\\x,&{\text{if }}x\geq 0\end{cases}}}

Absolute values can exist in linear optimization problems in two primary instances: in constraints and in the objective function.

Absolute Values in Constraints

Within constraints, absolute value relations can be transformed into one of the following forms:

{\displaystyle |X| = 0}

{\displaystyle |X| \le C}

{\displaystyle |X| \ge C}

Where {\textstyle X} is a linear combination ({\textstyle ax_1 + bx_2 + ...} where {\textstyle a, b} are constants) and {\textstyle C} is a constant {\textstyle > 0}.

{\displaystyle |X| = 0}

In this form, the only possible solution is if

{\displaystyle X = 0}

simplifying the constraint. Note that this solution also occurs if the constraint is in the form

{\displaystyle |X| \le 0}

due to the same conclusion that the only possible solution is {\textstyle X = 0}.

{\displaystyle |X| \le C}

The second form a linear constraint can exist in is

{\displaystyle |X|\leq C}

In this case, the same feasible region can be described by splitting the constraint into two:

{\displaystyle X\leq C}

{\displaystyle -X\leq C}

The solution can be understood visually, since {\textstyle X} must lie between {\textstyle -C} and {\textstyle C}, as shown below:

[Figure: number line for X less than C]
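The equivalence between the absolute value constraint and the split pair can also be checked numerically. The following is a minimal sketch; the function names and the sample grid are illustrative assumptions, not part of the original method.

```python
def satisfies_abs_le(x: float, c: float) -> bool:
    """Original constraint |X| <= C."""
    return abs(x) <= c


def satisfies_split(x: float, c: float) -> bool:
    """Reformulated pair of linear constraints: X <= C and -X <= C."""
    return x <= c and -x <= c


# Compare both forms on a grid of sample values for X, with C = 3.
C = 3.0
samples = [k / 2 for k in range(-10, 11)]  # -5.0, -4.5, ..., 5.0
agree = all(satisfies_abs_le(x, C) == satisfies_split(x, C) for x in samples)
print(agree)  # True
```

Both linear constraints must hold at the same time, which is why this case stays within ordinary linear programming.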

{\displaystyle |X| \ge C}

Visually, the solution space for the last form is the complement of the second solution above, resulting in the following representation:

[Figure: number line for X greater than C]

In expression form, the solutions can be written as:

{\displaystyle X\geq C}

{\displaystyle -X\geq C}

As seen visually, the feasible region has a gap and is thus non-convex. The two expressions also cannot hold simultaneously, since {\textstyle C > 0}. This means that constraints in this form cannot be transformed into a set of purely linear constraints.

An approach to reach a solution for this particular case exists in the form of Mixed-Integer Linear Programming, where only one of the inequalities above is "active".

The inequality can be reformulated into the following:

{\displaystyle X + N*Y \ge C}

{\displaystyle -X + N*(1-Y) \ge C}

{\displaystyle Y = 0, 1}

With this new set of constraints, a large constant {\textstyle N} is introduced, along with a binary variable {\textstyle Y}. So long as {\textstyle N} is at least as large as an upper bound on {\textstyle |X| + C}, the large constant multiplied by the binary variable ensures that one of the two constraints is automatically satisfied, leaving the other one active. For instance, if {\textstyle Y = 0}, the new constraints will resolve to:

{\displaystyle X \ge C}

{\displaystyle -X + N \ge C}

Since {\textstyle N} is sufficiently large, the latter constraint will always be satisfied, leaving only one relation active: {\textstyle X \ge C}. Functionally, this allows for the XOR logical operation of {\textstyle X \geq C} and {\textstyle -X \geq C}.
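The big-constant reformulation can be sanity-checked by enumerating both values of the binary variable. The sketch below assumes that X is bounded between -10 and 10 and uses N = 20, with C = 3; these values and the function names are hypothetical choices for illustration.

```python
def abs_ge_feasible(x: float, c: float) -> bool:
    """Original constraint |X| >= C."""
    return abs(x) >= c


def big_m_feasible(x: float, c: float, n: float) -> bool:
    """True if some binary Y satisfies both reformulated constraints:
    X + N*Y >= C and -X + N*(1 - Y) >= C."""
    return any(
        x + n * y >= c and -x + n * (1 - y) >= c
        for y in (0, 1)
    )


C = 3
N = 20  # assumed to be at least an upper bound on |X| + C (here 10 + 3)
samples = range(-10, 11)
agree = all(abs_ge_feasible(x, C) == big_m_feasible(x, C, N) for x in samples)
print(agree)  # True
```

If N were chosen too small (for example N = 5 here), values such as X = 10 would be wrongly excluded, which is why N must dominate the bound on X.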

Absolute Values in Objective Functions

In objective functions, to leverage transformations of absolute functions, all constraints must be linear.

Similar to the case of absolute values in constraints, there are different approaches to reformulating the objective function, depending on whether the sign constraints are satisfied. The sign constraints are satisfied when the coefficients of the absolute value terms are all:

- Positive for a minimization problem

- Negative for a maximization problem

Sign Constraints are Satisfied

At a high level, the transformation works similarly to the second case of absolute values in constraints: it bounds the absolute value term with a new variable, {\textstyle Z}.

If {\textstyle |X|} is the absolute value term in our objective function, two additional constraints are added to the linear program:

{\displaystyle X\leq Z}

{\displaystyle -X\leq Z}

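The {\textstyle |X|} term in the objective function is then replaced by {\textstyle Z}, turning the original problem into one with a linear objective and linear constraints. Because the sign constraints are satisfied, the optimization pushes {\textstyle Z} down onto {\textstyle |X|} at the optimum.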
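As a small illustration of this substitution, the sketch below compares a toy minimization problem with its reformulated version by brute force over an integer grid. The toy problem (minimize |x1 - x2| subject to x1 + x2 = 10 and x1 >= 7) and all names are assumptions made for the example; a real model would be passed to an LP solver instead.

```python
from itertools import product

grid = range(0, 11)  # candidate integer values for x1, x2 and z


def feasible(x1: int, x2: int) -> bool:
    """Linear constraints of the toy problem."""
    return x1 + x2 == 10 and x1 >= 7


# Original objective: minimize |X| with X = x1 - x2.
original = min(
    abs(x1 - x2) for x1, x2 in product(grid, grid) if feasible(x1, x2)
)

# Reformulated: minimize z subject to X <= z and -X <= z.
reformulated = min(
    z
    for x1, x2, z in product(grid, grid, grid)
    if feasible(x1, x2) and (x1 - x2) <= z and -(x1 - x2) <= z
)

print(original, reformulated)  # 4 4
```

Both versions reach the same optimum because, for a minimization problem with a positive coefficient, nothing is gained by leaving Z larger than |X|.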

Sign Constraints are Not Satisfied

In order to transform problems where the coefficient signs of the absolute value terms do not fulfill the conditions above, a conclusion similar to that of the last case for absolute values in constraints is reached: integer variables are needed, which yields a MILP rather than an LP.

The following constraints need to be added to the problem:

{\displaystyle X + N*Y \ge Z}

{\displaystyle -X + N*(1-Y) \ge Z}

{\displaystyle X \le Z}

{\displaystyle -X \le Z}

{\displaystyle Y = 0, 1}

Again, {\textstyle N} is a large constant, {\textstyle Z} is a replacement variable for {\textstyle |X|} in the objective function, and {\textstyle Y} is a binary variable. The first two constraints ensure that exactly one of them is active while the other is automatically satisfied, following the same logic as above. The third and fourth constraints ensure that {\textstyle Z} is at least {\textstyle |X|}, whether {\textstyle X} is positive or negative; together with the active constraint, this forces {\textstyle Z = |X|}. For instance, for the case of {\textstyle Y = 0}, the new constraints will resolve to:

{\displaystyle X \ge Z}

{\displaystyle -X + N \ge Z}

{\displaystyle X \le Z}

{\displaystyle -X \le Z}

As {\textstyle N} is sufficiently large ({\textstyle N} must be at least {\textstyle 2|X|} for this approach), the second constraint is always satisfied. The remaining constraints {\textstyle X \ge Z} and {\textstyle X \le Z} can only hold together when {\textstyle Z = X}. The fourth constraint, {\textstyle -X \le Z}, then requires {\textstyle X} to be non-negative, so {\textstyle Z = X = |X|}. Together, these constraints allow for the selection of the largest {\textstyle |X|} for maximization problems (or smallest for minimization problems).
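The effect of the four constraints can be checked by enumerating, for a fixed X, every candidate Z and both values of Y. This is a minimal sketch under assumed values (N = 20, integer candidates for Z between -10 and 10); the function name is hypothetical.

```python
def feasible_z_values(x: int, n: int, z_candidates) -> set:
    """Collect every Z for which some binary Y satisfies all four constraints."""
    feasible = set()
    for z in z_candidates:
        for y in (0, 1):
            if (
                x + n * y >= z             # first big-constant constraint
                and -x + n * (1 - y) >= z  # second big-constant constraint
                and x <= z                 # Z >= X
                and -x <= z                # Z >= -X
            ):
                feasible.add(z)
    return feasible


N = 20  # assumed large constant, at least 2|X| for the X values below
for x in (-3, 0, 4):
    print(x, feasible_z_values(x, N, range(-10, 11)))
# -3 {3}
# 0 {0}
# 4 {4}
```

In each case the only feasible value of Z is |X|, so Z can safely stand in for |X| in the objective regardless of the sign of its coefficient.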

Numerical Example

We replace the absolute value quantities with a single variable:

We must introduce additional constraints to ensure we do not lose any information by doing this substitution:

The problem has now been reformulated as a linear programming problem that can be solved normally:

The optimum value for the objective function is , which occurs when and and .

Applications

Optimization with absolute values has no applications specific to it; rather, absolute values must at times be accounted for when utilizing the simplex method.

Consider the problem {\textstyle Ax = b}; {\textstyle \max z = \sum_j c_j |x_j|}. This problem cannot, in general, be solved with the simplex method. The problem has a simplex method solution (with unrestricted basis entry) only if the {\textstyle c_j} are nonpositive (non-negative for minimization problems).

The primary application of absolute-value functionals in linear programming has been for absolute-value or {\textstyle L_1}-metric regression analysis. Such an application is always a minimization problem with all {\textstyle c_j} equal to 1, so that the required conditions for valid use of the simplex method are met.

By reformulating the original problem into a Mixed-Integer Linear Program (MILP), in most cases we should be able to use GAMS/Pyomo/JuliaOPT to solve the problem.

- Application in Finance: Portfolio Selection

Here, the same technique used in the Numerical Example section to reduce the problem to a linear program is applied again. An example is given below.

A portfolio is determined by the fraction of one's assets put into each investment. It can be denoted as a collection of nonnegative numbers {\textstyle x_j}, where j = 1, 2,...,n. Because each {\textstyle x_j} stands for a portion of the assets, they sum to one. To obtain the highest reward by finding the right mix of assets, let , the positive parameter, denote the importance of risk relative to the return, and let {\textstyle R_j} denote the return in the next time period on investment j, j = 1, 2,..., n. The total return one would obtain from the investment is . The expected return is . The Mean Absolute Deviation from the Mean (MAD) is .

maximize

subject to

where

This problem is not yet a linear programming problem. Similar to the numerical example shown above, the approach is to replace each absolute value with a new variable and impose inequality constraints that ensure the new variable equals the appropriate absolute value once an optimal value is obtained. To simplify the program, an average of the historical returns can be taken in order to get the mean expected return: . Thus the objective function is turned into:

Now, replace with a new variable and thus the problem can be rewritten as:

maximize

subject to . t = 1, 2,...,T

where

. j = 1, 2,...,n

. t = 1, 2,...,T

Finally, after these simplifications, the original problem is converted into a linear program, which is easier to solve.
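To make the role of the replacement variables concrete, the sketch below evaluates a risk-reward objective for a fixed portfolio from historical returns. The formulas are not shown in the text above, so the form used here is an assumption based on the usual mean absolute deviation model in the Vanderbei reference: reward is the weighted mean return, risk is the average over periods of the absolute deviation of the portfolio return from its mean, and the objective is `mu * reward - risk`. The names `history`, `weights` and `mu`, and the sample data, are hypothetical.

```python
def mean_returns(history):
    """Average historical return of each asset; history[t][j] is the
    return of asset j in period t."""
    periods = len(history)
    assets = len(history[0])
    return [sum(row[j] for row in history) / periods for j in range(assets)]


def deviations(weights, history):
    """Deviation of the portfolio return from its mean in each period."""
    r = mean_returns(history)
    return [
        sum(w * (row[j] - r[j]) for j, w in enumerate(weights))
        for row in history
    ]


def objective(weights, history, mu):
    """Assumed objective: mu * reward - mean absolute deviation."""
    r = mean_returns(history)
    reward = sum(w * rj for w, rj in zip(weights, r))
    # y_t is the new variable replacing each absolute value; the tightest
    # value satisfying -y_t <= deviation_t <= y_t is |deviation_t|.
    y = [abs(d) for d in deviations(weights, history)]
    return mu * reward - sum(y) / len(history)


history = [[0.02, 0.01], [0.00, 0.03], [0.04, 0.02]]  # 3 periods, 2 assets
weights = [0.5, 0.5]  # nonnegative fractions that sum to one
value = objective(weights, history, mu=1.0)
print(round(value, 6))
```

In the linear program the solver chooses the weights and the replacement variables together; this sketch only evaluates the objective for one fixed choice of weights.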

Conclusion

The presence of an absolute value within the objective function prevents the use of certain optimization methods. Solving these problems requires that the function be manipulated in order to continue with linear programming techniques like the simplex method.

References

- "Absolute Values." lp_solve, http://lpsolve.sourceforge.net/5.1/absolute.htm. Accessed 20 Nov. 2020.

- Optimization Methods in Management Science / Operations Research. Massachusetts Institute of Technology, Spring 2013, https://ocw.mit.edu/courses/sloan-school-of-management/15-053-optimization-methods-in-management-science-spring-2013/tutorials/MIT15_053S13_tut04.pdf. Accessed 20 Nov. 2020.

- https://www.ise.ncsu.edu/fuzzy-neural/wp-content/uploads/sites/9/2019/08/LP-Abs-Value.pdf

- Vanderbei R.J. (2008) Financial Applications. In: Linear Programming. International Series in Operations Research & Management Science, vol 114. Springer, Boston, MA. https://doi.org/10.1007/978-0-387-74388-2_13