RMSProp

Author: Jason Huang (SysEn 6800 Fall 2020)

Introduction

RMSProp, root mean square propagation, is an optimization algorithm designed for Artificial Neural Network (ANN) training. It is an unpublished algorithm, first proposed by Geoff Hinton in lecture six of the Coursera course “Neural Networks for Machine Learning”. RMSProp belongs to the family of adaptive learning rate methods, which have been growing in popularity in recent years. It extends the Stochastic Gradient Descent (SGD) algorithm and the momentum method, and it is a foundation of the Adam algorithm. One application of RMSProp is stochastic mini-batch gradient descent.

Theory and Methodology

Perceptron and Neural Networks

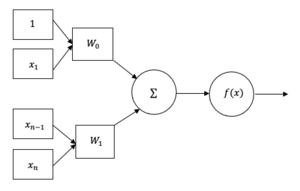

The perceptron is an algorithm for supervised learning of binary classifiers. It can also be regarded as a simplified, single-layer version of an Artificial Neural Network (ANN), which makes it a useful starting point for understanding neural networks. In Artificial Intelligence (AI), a neural network is intended to imitate the functioning of the human brain, and the perceptron represents the behavior of a single small unit of the nervous system. The basic form of the perceptron consists of inputs, weights, a bias, a net sum, and an activation function.

The perceptron starts by taking the input values and multiplying each of them by its weight. The weighted inputs are then added together to form the weighted sum, and the weighted sum is passed to the activation function to produce the perceptron's output.
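The steps above can be sketched in a few lines of Python. This is only an illustrative sketch: the step activation, the function name `perceptron_output`, and the AND-gate weights and bias are assumptions chosen for the example, not values taken from this article.

```python
def perceptron_output(inputs, weights, bias):
    """Return the binary output of a single perceptron."""
    # Multiply each input by its weight, add the products and the bias:
    # this is the weighted (net) sum.
    weighted_sum = sum(x * w for x, w in zip(inputs, weights)) + bias
    # Step activation (an assumption): output 1 when the sum is non-negative.
    return 1 if weighted_sum >= 0 else 0


# Hypothetical weights and bias that make the perceptron act as a logical AND.
and_weights = [1.0, 1.0]
and_bias = -1.5

outputs = [perceptron_output([a, b], and_weights, and_bias)
           for a in (0, 1) for b in (0, 1)]
print(outputs)  # inputs (0,0), (0,1), (1,0), (1,1) -> [0, 0, 0, 1]
```

Only the input pair (1, 1) pushes the weighted sum above zero, so the unit fires only in that case.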

A neural network works similarly to the neural network of the human brain. A “neuron” in a neural network is a mathematical function that collects and classifies information according to a specific architecture. A neural network contains layers of interconnected nodes, each of which can be regarded as a perceptron and resembles a multiple linear regression. The perceptron feeds the signal produced by the multiple linear regression into an activation function, which may be nonlinear.

RProp

RProp, or Resilient Backpropagation, is a widely used algorithm for supervised learning with multi-layered feed-forward networks. The basic concept of the backpropagation learning algorithm is the repeated application of the chain rule to compute the influence of each weight in the network with respect to an arbitrary error function $E$. The derivative of the error function can be written as:

$$\frac{\partial E}{\partial w_{ij}} = \frac{\partial E}{\partial s_{i}}\,\frac{\partial s_{i}}{\partial net_{i}}\,\frac{\partial net_{i}}{\partial w_{ij}}$$

where $w_{ij}$ is the weight from neuron $j$ to neuron $i$, $s_{i}$ is the output, and $net_{i}$ is the weighted sum of the inputs of neuron $i$. Once the partial derivative for each weight is known, the error function can be minimized by performing a simple gradient descent:

$$w_{ij}(t+1) = w_{ij}(t) - \epsilon\,\frac{\partial E}{\partial w_{ij}}(t)$$

The choice of the learning rate $\epsilon$, which scales the derivative, has an important effect on the time needed until convergence is reached. If it is set too small, too many steps are needed to reach an acceptable solution; on the contrary, a large learning rate may lead to oscillation, preventing the error from falling below a certain value [7].
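The effect of the learning rate can be seen on a toy problem. The sketch below assumes a hypothetical one-dimensional error function E(w) = w², which is not taken from the article, and runs plain gradient descent with three different learning rates.

```python
def gradient_descent(learning_rate, steps=20, w=1.0):
    """Minimise the toy error E(w) = w**2 and return the final weight."""
    for _ in range(steps):
        grad = 2.0 * w                    # dE/dw for E(w) = w**2
        w = w - learning_rate * grad      # simple gradient descent update
    return w


for rate in (0.01, 0.4, 1.0):
    print(rate, gradient_descent(rate))

# 0.01: the weight shrinks by only 2% per step, so it is still far from 0.
# 0.4 : the weight shrinks by 80% per step and is practically 0.
# 1.0 : each step flips the sign of the weight, so it oscillates between
#       +1 and -1 and the error never decreases.
```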

In addition, the method can be combined with the momentum method to mitigate the above problem and to accelerate convergence. The update can be rewritten as:

$$\Delta w_{ij}(t) = -\epsilon\,\frac{\partial E}{\partial w_{ij}}(t) + \mu\,\Delta w_{ij}(t-1)$$

where the momentum parameter $\mu$ scales the influence of the previous step on the current one. However, it turns out that the optimal value of the momentum parameter $\mu$ is as problem-dependent as the learning rate $\epsilon$, so no general improvement can be accomplished. Moreover, the RProp algorithm does not function well when the dataset is very large and the weights must be updated in mini-batches. Researchers therefore proposed a new algorithm, RMSProp, which covers more scenarios than RProp.
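A minimal sketch of gradient descent with a momentum term is given below, again on the hypothetical toy error E(w) = w². The function name and the parameter values are assumptions made for illustration only.

```python
def momentum_descent(learning_rate, momentum, steps=50, w=1.0):
    """Gradient descent with momentum on the toy error E(w) = w**2."""
    delta = 0.0                           # previous weight change
    for _ in range(steps):
        grad = 2.0 * w                    # dE/dw for E(w) = w**2
        # New step = scaled negative gradient + a fraction of the previous step.
        delta = -learning_rate * grad + momentum * delta
        w = w + delta
    return w


without_momentum = momentum_descent(learning_rate=0.01, momentum=0.0)
with_momentum = momentum_descent(learning_rate=0.01, momentum=0.9)
print(abs(without_momentum), abs(with_momentum))
```

With these particular values the run with momentum ends closer to the minimum, but a different choice of the momentum parameter can also slow convergence or cause overshooting, which is the problem dependence described above.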

RMSProp

The RProp algorithm does not work with mini-batches because it violates the central idea behind stochastic gradient descent: when the learning rate is small enough, the gradients are averaged over successive mini-batches. Consider a weight that receives a gradient of 0.1 on nine mini-batches and a gradient of -0.9 on the tenth. These gradients should roughly cancel each other out so that the weight stays approximately the same. RProp, which uses only the sign of the gradient, would instead increment the weight nine times and decrement it only once by about the same amount. RMSProp is designed so that the gradients do roughly cancel.
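The ten-mini-batch example can be checked numerically. The sketch below is a simplification: the sign-only rule uses a fixed step size rather than RProp's adaptive per-weight steps, and the RMS-normalized rule assumes the mean square stays roughly constant over the ten mini-batches. The step size of 0.01 is a hypothetical value.

```python
import math

grads = [0.1] * 9 + [-0.9]    # gradients seen on ten successive mini-batches
step = 0.01                   # hypothetical step size / learning rate


def sign(g):
    return (g > 0) - (g < 0)


# Sign-only update (simplified RProp): the gradient magnitude is ignored,
# so nine moves in one direction are offset by only one move back.
sign_only_change = sum(-step * sign(g) for g in grads)

# Plain gradient descent with a small learning rate: the gradients cancel.
sgd_change = sum(-step * g for g in grads)

# Gradient divided by the root mean square of the gradients, assuming the
# mean square is roughly constant across these mini-batches.
mean_square = sum(g * g for g in grads) / len(grads)
rms_change = sum(-step * g / math.sqrt(mean_square) for g in grads)

print(sign_only_change, sgd_change, rms_change)
```

The sign-only rule moves the weight by a net eight steps, while the other two leave it essentially where it started.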

RMSProp combines the robustness of using the sign of the gradient, as in the RProp algorithm, with the efficiency of mini-batches and an averaging over mini-batches that allows the gradients to be combined in the right way. RMSProp keeps a moving average of the squared gradients for each weight, and then divides the gradient by the square root of this mean square.

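The update equations can be written as:

$$E[g^{2}](t) = \beta E[g^{2}](t-1) + (1-\beta)\left(\frac{\partial c}{\partial w}\right)^{2}$$

$$w_{ij}(t) = w_{ij}(t-1) - \frac{\eta}{\sqrt{E[g^{2}]}}\,\frac{\partial c}{\partial w_{ij}}$$

where $E[g^{2}]$ is the moving average of squared gradients, $\frac{\partial c}{\partial w}$ is the gradient of the cost function with respect to the weight, $\eta$ is the learning rate, and $\beta$ is the moving average parameter. The default value of $\beta$ is 0.9, consistent with the example in which a gradient of 0.1 on nine mini-batches and -0.9 on the tenth sums to approximately zero, and the default value of $\eta$ is 0.001, chosen from experience.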
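A single RMSProp update for one weight can be sketched as follows. The function name `rmsprop_step` is hypothetical, and the small `stabilizer` constant added to the denominator is an assumption commonly made in implementations to avoid division by zero; it is not part of the description above.

```python
import math


def rmsprop_step(w, grad, mean_square, lr=0.001, beta=0.9, stabilizer=1e-8):
    """Apply one RMSProp update and return (new weight, new moving average)."""
    # Moving average of the squared gradient for this weight.
    mean_square = beta * mean_square + (1.0 - beta) * grad ** 2
    # Divide the gradient by the root of the mean square before stepping.
    w = w - lr * grad / (math.sqrt(mean_square) + stabilizer)
    return w, mean_square


# One step on a toy cost c(w) = w**2 starting from w = 1 (gradient 2w = 2).
w_new, ms_new = rmsprop_step(w=1.0, grad=2.0, mean_square=0.0)
print(w_new, ms_new)
```

Because the moving average starts at zero in this sketch, the first step has size of roughly `lr / sqrt(1 - beta)` whatever the gradient magnitude is.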

Numerical Example

For a simple unconstrained optimization problem, set $\beta$ = 0.9 and $\eta$ = 0.4 and transform the optimization problem to the standard RMSProp form. The equations are presented below:

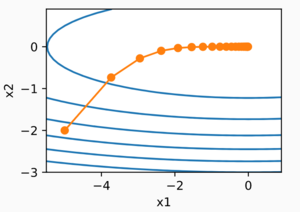

Using a programming language to visualize the trajectory of the RMSProp algorithm, we can observe that the curve converges to a certain point. For this particular problem, the minimizing solution is obtained at the point to which the trajectory converges.
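A trajectory of this kind can be produced with a short script. The sketch below is not the article's exact problem: the objective f(x1, x2) = 0.1·x1² + 2·x2² and the starting point (-5, -2) are assumptions chosen for illustration, while the moving average parameter 0.9 and the learning rate 0.4 follow the values quoted above. The small `stabilizer` term is also an assumption.

```python
import math


def objective(x1, x2):
    # Hypothetical objective (an assumption, not necessarily the article's).
    return 0.1 * x1 ** 2 + 2.0 * x2 ** 2


def gradient(x1, x2):
    return 0.2 * x1, 4.0 * x2


def rmsprop_trajectory(start, eta=0.4, beta=0.9, steps=20, stabilizer=1e-8):
    """Run RMSProp from `start` and return the list of visited points."""
    x1, x2 = start
    s1 = s2 = 0.0                         # moving averages of squared gradients
    path = [(x1, x2)]
    for _ in range(steps):
        g1, g2 = gradient(x1, x2)
        s1 = beta * s1 + (1.0 - beta) * g1 ** 2
        s2 = beta * s2 + (1.0 - beta) * g2 ** 2
        x1 -= eta * g1 / (math.sqrt(s1) + stabilizer)
        x2 -= eta * g2 / (math.sqrt(s2) + stabilizer)
        path.append((x1, x2))
    return path


path = rmsprop_trajectory(start=(-5.0, -2.0))
print(path[-1], objective(*path[-1]))
```

Plotting the points in `path` gives the trajectory. For this assumed objective the iterates approach the origin, which is its minimizer.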

Applications and Discussion

The applications of RMSProp concentrate on optimizing complex functions such as neural networks and on non-convex optimization problems that benefit from an adaptive learning rate. It is also widely used in stochastic problems. The RMSProp optimizer restricts the oscillations in the vertical direction. Therefore, we can increase the learning rate, and the algorithm can take larger steps in the horizontal direction and converge faster, in a manner similar to gradient descent combined with the momentum method.

In the first visualization scheme, the gradient-based optimization algorithms show different convergence rates. As the visualizations show, algorithms that do not scale their steps using gradient information struggle to break the symmetry and converge rapidly. RMSProp has a relatively higher convergence rate than SGD, Momentum, and NAG, beginning its descent faster, but it is slower than Ada-grad and Ada-delta, which are also adaptive learning rate algorithms. In conclusion, for large-scale problems or problems with large gradients, the methods that scale the gradients or step sizes, such as Ada-delta, Ada-grad, and RMSProp, perform better and with high stability.

Ada-grad is an adaptive learning rate algorithm that looks a lot like RMSProp. Ada-grad adds element-wise scaling of the gradient based on the historical sum of squares in each dimension. This means that we keep a running sum of squared gradients and then adapt the learning rate by dividing it by the square root of that sum. Since the concepts in RMSProp are widely used in other machine learning algorithms, it has high potential to be coupled with other methods such as momentum.
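The difference between a running sum and a moving average can be made concrete by comparing the effective step size (the learning rate divided by the accumulated scale) of the two methods. The sketch below assumes a constant gradient of 1.0 over 100 steps and a base learning rate of 0.1. These values, the function names, and the small `stabilizer` term are hypothetical choices for illustration.

```python
import math


def adagrad_step_sizes(grads, lr=0.1, stabilizer=1e-8):
    """Effective step size per iteration with a running SUM of squares."""
    total = 0.0
    sizes = []
    for g in grads:
        total += g * g
        sizes.append(lr / (math.sqrt(total) + stabilizer))
    return sizes


def rmsprop_step_sizes(grads, lr=0.1, beta=0.9, stabilizer=1e-8):
    """Effective step size per iteration with a MOVING AVERAGE of squares."""
    mean_square = 0.0
    sizes = []
    for g in grads:
        mean_square = beta * mean_square + (1.0 - beta) * g * g
        sizes.append(lr / (math.sqrt(mean_square) + stabilizer))
    return sizes


grads = [1.0] * 100
ada = adagrad_step_sizes(grads)
rms = rmsprop_step_sizes(grads)
print(ada[-1], rms[-1])
```

In this setting the Ada-grad step size keeps shrinking as the sum grows, while the RMSProp step size settles near the base learning rate because old squared gradients are gradually forgotten.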

Conclusion

RMSProp, root mean square propagation, is an optimization algorithm for training Artificial Neural Networks (ANNs) that adapts the learning rate for each weight and is derived from the concepts of gradient descent and RProp. By combining the efficiency of mini-batches with the averaging of gradients over successive mini-batches, RMSProp can reach a faster convergence rate than the original optimizer, though a lower one than more advanced optimizers such as Adam. Given the high performance of RMSProp and the possibility of combining it with other algorithms, harder problems may be better described and solved in the future.

Reference

1. A. Radford, "Visualizing Optimization Algos (open source)"

2. R. Yamashita, M. Nishio and R. KGian, "Convolutional neural networks: an overview and application in radiology", pp. 9:611–629, 2018.

3. V. Bushave, "Understanding RMSprop — faster neural network learning", 2018.

4. V. Bushave, "How do we ‘train’ neural networks ?", 2017.

5. S. Ruder, "An overview of gradient descent optimization algorithms", 2016.

6. R. Maksutov, "Deep study of a not very deep neural network. Part 3a: Optimizers overview", 2018.

7. M. Riedmiller, H. Braun, "A Direct Adaptive Method for Faster Back-propagation Learning: The RPROP Algorithm", pp. 586-591, 1993.

8. D. Garcia-Gasulla, "An Out-of-the-box Full-network Embedding for Convolutional Neural Networks", pp. 168-175, 2018.

9. Geoffrey Hinton, "Coursera Neural Networks for Machine Learning lecture 6", 2018.

10. Python keras.optimizers.RMSprop() Examples

11. RMSProp Algorithm Implementation Example

12. S. De, A. Mukherjee, and E. Ullah, "Convergence guarantees for RMSProp and Adam in non-convex optimization and an empirical comparison to Nesterov acceleration", conference paper at ICLR, 2019.

$$E[g^{2}](t)=\beta E[g^{2}](t-1)+(1-\beta )\left({\frac {\partial c}{\partial w}}\right)^{2}$$

$$w_{ij}(t)=w_{ij}(t-1)-{\frac {\eta }{\sqrt {E[g^{2}]}}}{\frac {\partial c}{\partial w_{ij}}}$$

$$E[g]$$