Simplex algorithm

Revision as of 03:48, 18 November 2020

Author: Guoqing Hu (SysEn 6800 Fall 2020)

Steward: Allen Yang, Fengqi You

Introduction

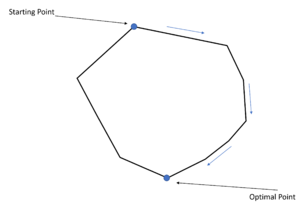

The simplex algorithm (or simplex method) is a widely used algorithm for solving linear programming (LP) problems. It can be regarded as one of the elementary tools for solving inequality problems, since many of these are converted to LPs and solved via the simplex algorithm[1]. The algorithm was proposed by George Dantzig, starting from the idea of descending step by step to one of the vertices of a convex polyhedron[2]. The name "simplex" may refer to the top vertex of the simplicial cone that geometrically represents the constraints of a linear program[3].

Algorithmic Discussion[4]

Two theorems in LP underlie the method:

- The feasible region of any LP problem is a convex set (every linear function has a second derivative of 0, so it changes monotonically along any direction). Therefore, if an LP has an optimal solution, there must be an extreme point of the feasible region that is optimal.

- For any LP, every basic feasible solution corresponds to exactly one extreme point of the feasible region, and every extreme point corresponds to at least one basic feasible solution.[4]

Based on the two theorems above, the LP problem can be depicted geometrically. Each face of the polyhedron is the boundary of one LP constraint, and every vertex is an extreme point in the sense of the theorems. The simplex method is a systematic way of moving from vertex to vertex along a path that leads to an optimal solution.

Consider the following expression as the standard form of a general linear programming problem:

<math> \max \quad \sum_{j=1}^n c_j x_j </math>

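With the following constraints:

<math> \begin{align} s.t. \quad \sum_{j=1}^n a_{ij}x_j &\leq b_i \quad i = 1,2,...,m \\
x_j &\geq 0 \quad j = 1,2,...,n \end{align} </math>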

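The first step of the simplex method is to add slack variables and a symbol that represents the objective function:

<math> \begin{align} \phi &= \sum_{j=1}^n c_j x_j \\
z_i &= b_i - \sum_{j=1}^n a_{ij}x_j \quad i = 1,2,...,m \end{align} </math>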

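The newly introduced slack variables may be confused with the original variables. Therefore, it is convenient to append the slack variables to the end of the list of x-variables, which gives the following expression:

<math> \begin{align} \phi &= \sum_{j=1}^n c_j x_j \\
x_{n+i} &= b_i - \sum_{j=1}^n a_{ij}x_j \quad i = 1,2,...,m \end{align} </math>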
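As a minimal sketch of this first step, the starting dictionary can be stored as plain Python lists. The function name `initial_dictionary`, the dictionary keys, and the small example problem are assumptions made for illustration and are not part of the original article; row `i` is read as "basic variable = `rhs[i]` minus the sum of `coef[i][j]` times the j-th non-basic variable".

```python
def initial_dictionary(c, A, b):
    """Starting dictionary for: maximize c.x subject to A x <= b, x >= 0.

    Original variables are numbered 1..n, slack variables n+1..n+m.
    """
    m, n = len(A), len(c)
    return {
        "nonbasic": list(range(1, n + 1)),       # x_1 .. x_n
        "basic": list(range(n + 1, n + m + 1)),  # x_{n+1} .. x_{n+m}
        "const": 0,                              # current objective value
        "obj": list(c),                          # objective coefficients
        "rhs": list(b),                          # b_i
        "coef": [list(row) for row in A],        # a_ij
    }


# Hypothetical example with n = 3 variables and m = 3 constraints.
d = initial_dictionary([5, 4, 3], [[2, 3, 1], [4, 1, 2], [3, 4, 2]], [5, 11, 8])
for var, b_i, row in zip(d["basic"], d["rhs"], d["coef"]):
    terms = " ".join(f"- {a}*x{j}" for a, j in zip(row, d["nonbasic"]))
    print(f"x{var} = {b_i} {terms}")
# first printed line: x4 = 5 - 2*x1 - 3*x2 - 1*x3
```

In this layout the slack variables start out as the basic variables, and setting every non-basic variable to zero gives the starting solution directly from `rhs`.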

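As the simplex method progresses, it moves from the starting dictionary (the equations above) through a sequence of dictionaries in search of the optimal value. Every dictionary has m basic variables, which appear on the left-hand side and determine the current feasible solution, and n non-basic variables, in terms of which the objective function is expressed. Each dictionary is written in the form:

<math> \begin{align}
\phi &= \bar{\phi} + \sum_{j \in \mathcal{N}} \bar{c}_j x_j \\
x_i &= \bar{b}_i - \sum_{j \in \mathcal{N}} \bar{a}_{ij} x_j \quad i \in \mathcal{B}
\end{align} </math>

Here <math>\mathcal{B}</math> and <math>\mathcal{N}</math> denote the index sets of the basic and non-basic variables; in the starting dictionary <math>\mathcal{N} = \{1,2,...,n\}</math> and <math>\mathcal{B} = \{n+1,n+2,...,n+m\}</math>.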

The bars indicate that the corresponding values change as the simplex method progresses. In each iteration exactly one variable goes from non-basic to basic and one goes the opposite way. The variable that goes from non-basic to basic is referred to as the entering variable. Since the goal is to increase Φ, the entering variable is selected from the non-basic variables whose coefficient in the objective row is positive. When no such variable can be selected, the optimal value has been found. There are usually several candidates (n>1), so a rule is needed to decide which one enters first. Because the objective is maximization, the candidate with the largest positive coefficient is commonly preferred.
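The choice of entering variable can be sketched in a few lines. This assumes the largest-positive-coefficient rule for a maximization problem written in dictionary form; the function name `choose_entering` is hypothetical, and other selection rules exist.

```python
def choose_entering(obj_coef):
    """Return the position of the largest positive objective coefficient.

    Returns None when no coefficient is positive, which means the
    objective cannot be increased and the current dictionary is optimal.
    """
    best = None
    for j, c_j in enumerate(obj_coef):
        if c_j > 0 and (best is None or c_j > obj_coef[best]):
            best = j
    return best


print(choose_entering([5, 4, 3]))     # 0
print(choose_entering([-1, -3, -1]))  # None
```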

The leaving variable is the variable that goes from basic to non-basic. It exists to ensure that the basic variables remain non-negative. Once the entering variable <math>x_k</math> is determined and all other non-basic variables are kept at zero, each basic variable <math>x_i</math> changes according to the equation below:

<math> x_i = \bar{b}_i - \bar{a}_{ik} x_k </math>

Since every basic variable must remain non-negative, the following inequality must hold for each basic index i:

<math> \bar{b}_i - \bar{a}_{ik} x_k \geq 0 </math>

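Here <math>x_k</math> is the entering variable. Only rows with <math>\bar{a}_{ik} > 0</math> can become negative as <math>x_k</math> increases. In such a row, the smallest value <math>x_i</math> can take is zero, since it cannot be negative, and it reaches zero when:

<math> x_k = \frac{\bar{b}_i}{\bar{a}_{ik}} </math>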

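Due to the non-negativity of all variables, the value of <math>x_k</math> can be raised only to the smallest of the values calculated from the above equation. Hence, the following equation can be derived, with the minimum taken over the basic rows i that have a positive coefficient:

<math> x_k = \min_{i \,:\, \bar{a}_{ik}>0} \, \frac{\bar{b}_i}{\bar{a}_{ik}} </math>

The basic variable whose row attains this minimum is the leaving variable.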
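The ratio test described here can be sketched as follows. The function name `ratio_test` and the sample numbers are assumptions for illustration; `rhs` holds the current right-hand sides and `column` holds the coefficients of the entering variable in each basic row.

```python
from fractions import Fraction


def ratio_test(rhs, column):
    """Return (row, step) for the smallest ratio rhs[i] / column[i].

    Only rows with a positive coefficient limit the entering variable.
    Returns (None, None) when no row does, i.e. the LP is unbounded.
    """
    best_row, best_step = None, None
    for i, (b_i, a_ik) in enumerate(zip(rhs, column)):
        if a_ik > 0:
            step = Fraction(b_i) / Fraction(a_ik)
            if best_step is None or step < best_step:
                best_row, best_step = i, step
    return best_row, best_step


row, step = ratio_test([5, 11, 8], [2, 4, 3])
print(row, step)  # 0 5/2
```

Exact fractions are used so that ties and zero ratios are compared without floating-point error.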

Once the leaving (basic) and entering (non-basic) variables are chosen, row operations are carried out to switch from the current dictionary to the new dictionary. This step is referred to as a pivot.
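The steps above can be put together in a short dictionary-form sketch. It assumes a maximization problem with all right-hand sides non-negative, uses the largest-coefficient rule for the entering variable, and has no anti-cycling safeguard; the function name `simplex` and the example data are hypothetical.

```python
from fractions import Fraction


def simplex(c, A, b):
    """Maximize c.x subject to A x <= b, x >= 0, assuming every b_i >= 0.

    Each row reads: x_basic[i] = rhs[i] - sum_j coef[i][j] * x_nonbasic[j].
    """
    m, n = len(A), len(c)
    nonbasic = list(range(1, n + 1))        # original variables
    basic = list(range(n + 1, n + m + 1))   # slack variables
    obj = [Fraction(v) for v in c]
    const = Fraction(0)
    rhs = [Fraction(v) for v in b]
    coef = [[Fraction(v) for v in row] for row in A]

    while True:
        # Entering variable: largest positive objective coefficient.
        k = max(range(n), key=lambda j: obj[j])
        if obj[k] <= 0:
            break  # nothing can increase the objective: optimal
        # Leaving variable: minimum ratio over rows with positive coefficient.
        rows = [i for i in range(m) if coef[i][k] > 0]
        if not rows:
            raise ValueError("problem is unbounded")
        r = min(rows, key=lambda i: rhs[i] / coef[i][k])

        # Pivot: solve row r for the entering variable ...
        p = coef[r][k]
        new_row = [a / p for a in coef[r]]
        new_row[k] = 1 / p
        new_rhs = rhs[r] / p
        # ... and substitute it into every other row and the objective.
        for i in range(m):
            if i == r:
                continue
            a = coef[i][k]
            rhs[i] -= a * new_rhs
            coef[i] = [coef[i][j] - a * new_row[j] for j in range(n)]
            coef[i][k] = -a * new_row[k]
        c_k = obj[k]
        const += c_k * new_rhs
        obj = [obj[j] - c_k * new_row[j] for j in range(n)]
        obj[k] = -c_k * new_row[k]
        coef[r], rhs[r] = new_row, new_rhs
        basic[r], nonbasic[k] = nonbasic[k], basic[r]

    x = [Fraction(0)] * (n + m)
    for i, var in enumerate(basic):
        x[var - 1] = rhs[i]
    return const, x[:n]


value, x = simplex([5, 4, 3], [[2, 3, 1], [4, 1, 2], [3, 4, 2]], [5, 11, 8])
print(value, [int(v) for v in x])  # 13 [2, 0, 1]
```

On this example the sketch performs two pivots: the first variable enters and the first slack leaves, then the third variable enters and the third slack leaves, after which every objective coefficient is negative.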