Trust-region methods

Author: Tung-Yen Wang (tw565) (CHEME 6800, Fall 2024)

Stewards: Nathan Preuss, Wei-Han Chen, Tianqi Xiao, Guoqing Hu

Introduction

Trust region methods are iterative optimization techniques designed to find local minima or maxima of objective functions, particularly in nonlinear problems (NLP), by iteratively refining approximations within dynamically adjusted trust regions[1]. Unlike line search methods, which determine the step size along a predefined direction, trust region methods concurrently optimize both the direction and the magnitude of the step within a specified neighborhood around the current iterate.

The origins of these methods date back to Levenberg, who introduced a modified version of the Gauss-Newton method for solving nonlinear least squares problems by adding a damping term to control the step size. This approach was later rediscovered and refined by Morrison and Marquardt, ultimately gaining prominence as the Levenberg-Morrison-Marquardt method [2]. Subsequent advancements led to the development and standardization of trust region methods, further improving their robustness and application.

The central core of trust region methods lies in the idea of constructing a simplified model — often a quadratic approximation — that represents the objective function near the current point. This model serves as a surrogate, guiding the search for the optimum within a bounded region around the current estimate. The size of the trust region is dynamically adjusted based on how well the model predicts the actual behavior of the objective function. If the model is predicting accurate behavior of the objective function, the size of the trust region is increased, allowing the algorithm to explore a larger area around the current solution; the trust region is reduced if otherwise [3].

Algorithm Discussion

The mathematical framework involved in trust region methods starts with the construction of a local approximation of the objective function and defining a constrained region within which this model is trusted to be accurate. Consider the following local quadratic approximation of $ f $ around $ x_k $:

(1) $ m_k(p) = f_k + \nabla f_k^T p + \frac{1}{2} p^T B_k p $

where $ p $ represents the step or direction vector from the current point, $ f_k=f(x_k) $, $ \nabla f_k = \nabla f(x_k) $, and $ B_k $ is a symmetric matrix.

The first two terms $ m_k(p) $ are designed to match the first two terms of the Taylor series expansion of $ f(x_k+p) $. The expression can also be written as:

$ m_k(p) = f_k + \nabla f_k^T p + O(||p||^2) $

where higher-order terms $ O(||p||^2) $ are approximated using $ B_k $. It can also be noted that the difference between $ m_k(p) $ and $ f(x_k+p) $ is of order $ O(||p||^2) $, suggesting that when p is small, the approximation error is minimal.

For each iteration, seek a solution of the subproblem:

(2) $ m_k(p) = f_k + \nabla f_k^T p + \frac{1}{2} p^T B_k p $ $ s.t. ||p|| \leq \Delta_k $

where $ \Delta_k $ represents the trust region radius and is greater than 0.

Here, $ p_k $ represents the step taken at iteration $ k $, hence the next point calculated can be denoted as $ x_k+1=x_k+p $. For the moment, $ || \cdot || $ is the Euclidean norm.

To solve the subproblem, the trust region radius $ k $ must be determined at each iteration.

(3) $ p_k=\frac{f(x_k) - f(x_k+p_k)}{m_k(0) - m_k(p_k)} = \frac{actual\; reduction}{predicted\; reduction} $

If $ p_k $ is negative, the objective value $ f(x_k+p) $ would be greater than the current value $ f(x_k) $, leading to the rejection of the step. If $ p_k $ is positive but not close to 1, the trust region remains unchanged. If $ p_k $ is positive but close to 0, the trust region radius $ \Delta_k $ is reduced. On the other hand, if $ p_k $is approximately equal to 1, the trust region radius $ \Delta_k $ is expanded for the next point iteration [1].

Cauchy Point

Identical to line search, there is no need to compute the subproblem (2) to achieve optimal minimum as the model improves itself with each iteration, meaning that as long as the step lies in the trust region and provides sufficient reduction, the algorithm can converge to the best solution. This sufficient reduction can be expressed as the Cauchy Point, that is:

(4) $ p_k^C =-\tau_k\frac{\Delta_k}{||\nabla f_k||} $

where

$ \tau_k= \begin{cases} 1, & \text{if }\nabla f_k^TB_k\nabla f_k \leq 0\\ min(\frac{||\nabla f_k||^3}{\Delta _k\nabla f_k^TB_k\nabla f_k}, 1), & \text{if otherwise} \end{cases} $

Computations often start with this as it is simple to calculate. To achieve better performance, however, it is necessary to integrate information about $ B_k $ to refine $ p_k $. Consider the following:

$ p_B= -B_k^{-1} \nabla f_k $

as the full Newton step where $ ||p_k||\leq \Delta_k $.

The Dogleg Method

The full Newton step, however, is not feasible when the trust region constraint is active. To handle such cases, the Dogleg method is used to approximate the subproblem (2). This method finds a solution that considers two line segments. The first line is the steepest descent direction:

$ p^U =-\frac{g^Tg}{g^TB_g}g $

where $ g= \nabla f(x_k) $.

The second line runs from $ p^U $ to $ p^B $ for $ \tau\in [0,2] $:

$ p(\tau)= \begin{cases} \tau p^U & 0\leq \tau\leq 1\\ p^U+(\tau -1)(p^B-p^U) & 1\leq \tau\leq 2\end{cases} $

where $ p^B= -B^{-1}f(x_k) $.

The method works well when $ B_k $ is positive definite. However, when $ B_k $ is not convex, $ p_B $ cannot guaranteed to be a meaningful solution since the method is not well-suited to handle negative $ B_k $.

The Conjugated Gradient Steihaug’s Method

In contrast to the methods above, Steihaug’s method is designed to better handle large-scale trust region subproblems. It mitigates the limitations of slower convergence and the need for a positive definite Hessian. In addition, it handles negative curvature by enabling early termination when such directions are detected or when the trust region boundary is reached. These features make the method more scalable and robust, suited for large-scale or poorly conditioned optimization problems [4]. The method is described as follows:

given $ \varepsilon > 0 $

set $ p_0 = 0, r_0= g, d_0=-g,j=0 $;

if $ ||r_0||< \varepsilon $

- return $ p_0 = p_j $;

for all $ j $,

- if $ d_j^TBd_j\leq0 $

- determine $ \tau $ such that $ p=p_j+\tau d_j $ minimizes (2) and satisfies $ ||p|| = \Delta $

- return $ p $;

- set $ \alpha _j =\frac{r_j^Tr_j}{d_j^TBd_j} $;

- set $ p_{j+1} = \alpha _jd_j $;

- if $ ||p_{j+1}|| \geq \Delta $;

- determine the positive value of $ \tau $ such that $ p=p_j+\tau d_j $ and satisfies $ ||p|| = \Delta $

- return $ p $;

- set $ r_{j+1}=r_j+\alpha_jBd_j $;

- if $ ||r_{j+1}|| \leq \varepsilon $

- return $ p=p_{j+1} $;

- set $ \beta_{j+1}=\frac{r_{j+1}^Tr_{j+1}}{r_{j+1}r_j} $;

- set $ d_{j+1}= -r_{j+1}+\beta_{j+1}d_j $;

end (for)

The initialization of $ p_0 = 0 $ is fundamental, as the Cauchy Point is obtained right after in $ p_1 $; this condition guarantees global convergence.

Termination Criteria

The convergence of a trust region algorithm (1) depends on the accuracy of the approximate solution to the subproblem (2). It is desirable that the subproblem is approximated rather than solved exactly, provided the approximation guarantees the algorithm’s convergence [5]. Fletcher proposes that the algorithm terminates when either of the following are small [6]:

- $ f(x_k)-f(x_{k+1}) $

- $ x_k-x_{k+1} $

- $ \nabla f(x_k) $

These criteria ensure that the algorithm terminates efficiently while maintaining convergence.

In addition, termination can be considered when $ \Delta_k $ is small as it is possible for $ \Delta_k $ to converge to 0 when $ k $ approaches infinity.

Numerical example

Example One

This section introduces trust region methods and demonstrates their application using a simple function as a numerical example. Consider the following:

$ f(x)=(x-2)^2 $

Our goal is to minimize this function, and clearly, the solution is $ x=2 $ where $ f(2)=0 $.

The gradient is $ f'(x)=2(x-2) $ and the Hessian is $ f''(x)=2 $.

Iteration 1:

To calculate, begin the initial point at $ x_0=0 $ and a trust region radius as $ 1.0 $. From this initial point, the following is obtained:

Step 1: evaluate the current point:

$ f(x_0)=(0-2)^2=4 $

Gradient: $ f'(x_0)=2(0-2)=-4 $

Hessian: $ 2 $

Step 2: form the quadratic model

$ m_0(p) = f(x_0) + \nabla f_0^T p + \frac{1}{2} p^T H_0 = m_0(p) = 4 + (-4) p + \frac{1}{2} p^2(2)=4-4p+p^2 $

Step 3: solve the subproblem

$ m_0'(p)=-4+2p=0 $ $ p=2 $

However, $ p=2 $ is outside the trust region radius $ 1.0 $. Hence, $ |p|\leq 1 $ will be utilized to find the best step.

$ m_0(1)=4-4(1)+1^2=1 $

$ m_0(-1)=4-4(-1)+(-1)^2=9 $

The lower value is $ p=1 $

Step 4: check function reduction

Proposed new point: $ x_1=x_0+p=0+1=1 $

Actual function at new point: $ f(1)=(1-2)^2=1 $

Step 5: compute ratio

Actual reduction: $ f(x_0)-f(x_1)=4-1=3 $

Prediction reduction: $ f(x_0)-m_0(1)=4-1=3 $

Hence $ p=\frac{3}{3}=1 $

Step 6: update trust region and accept/reject step

Acceptant criteria for the ratio are typically as follows (may vary):

If $ p<0.25 $, reduce the trust region radius, if $ p>0.75 $, expand the trust region radius.

In the example, $ p $ is greater than $ p>0.75 $, thus the trust region should be expanded and the step is accepted. Suppose the trust region radius is doubled (i.e. to $ 2.0 $).

Iteration 2:

The point now is at $ x_1=1 $ and a radius as $ 2.0 $. From this point, the following is obtained:

Step 1: evaluate the current point:

$ f(1)=1 $

Gradient: $ f'(1)=2(1-2)=-2 $

Hessian: $ 2 $

Step 2: form the quadratic model

$ m_1(p) = f(1) + \nabla f_0^T p + \frac{1}{2} p^TH_1= 1-2p+p^2 $

Step 3: solve the subproblem

$ m_1'(p)= -2+2p =0 $ $ p=1 $

$ p\leq 2 $, then $ p=1 $ is valid

Step 4: check function reduction

Proposed new point: $ x_2=x_1+1=2 $

Actual function at new point: $ f(2)=(2-2)^2=0 $

Step 5: compute ratio

Actual reduction: $ f(1)-f(2)=1-0=1 $

Prediction reduction: $ f(1)-m_1(1)=1-0=1 $

Hence $ p=\frac{1}{1}=1 $

Step 6: update trust region and accept/reject step

In the example, since $ p $ is greater than $ p>0.75 $, the proposed step is accepted. By taking this step, the problem reaches the exact minimum is reached at $ x=2 $, and therefore terminates.

Example Two - Himmulblau’s Function

The following demonstrates a more complex example of trust region methods. Himmulblau’s function is a well-known mathematical function used extensively in optimization testing and research [7]. It is defined as two real variables and is characterized by having multiple global minima. The function is non-convex and multimodal, making it an excellent candidate for demonstrating the effectiveness of optimization algorithms. The following uses Steihaug’s method.

The function is defined as the following:

$ f(x,y)=(x^2+y-11)^2+(x+y^2-7)^2 $

where $ x $ and $ y $ are real numbers.

The four global minima of the function are at:

- $ (3.0,2.0) $

- $ (-2.805, 3.131) $

- $ (-3.779,-3.283) $

- $ (3.584,-1.848) $

Due to the complexities in the calculations involved in the trust region method algorithm, the minimize function with the 'trust-constr' method from the SciPy library in Python can be utilized to solve this problem. Consider the following implementation:

minimize(method=’trust-constr’)

Iteration 1:

To calculate, begin the optimization with the initial guess as $ (-4.0, 0) $ and trust region radius as $ 1.0 $. The optimal solution achieved from this iteration is $ (-3.382, -0.786) $, yield a function value of $ 95.453 $. The initial point gives a sufficient prediction, thus, increase the trust region radius to $ 7.0 $.

Iteration 2:

In this iteration, the updated point $ (-4.0, 0) $ and radius $ 7.0 $ are used to find a new step. However, an insufficient prediction was obtained, leading to a decrease in the trust region radius to $ 0.7 $. The optimal solution obtained from the previous iteration is retained for the next step.

Iteration 3:

With the updated point $ (-3.382, -0.786) $ trust region radius $ 0.7 $, a new point at $ (-3.072, -1.413) $ was obtained.

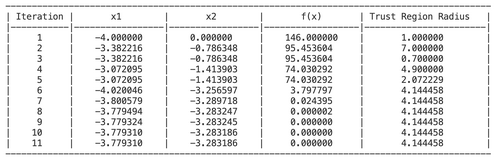

The following shows the remaining iterations:

The convergence achieved at iteration 11 with $ x=-3.779,y= -3.283 $ and $ f(x) = 0 $.

Applications

Trust region methods are applied in optimization problems across various domains due to their robustness, adaptability, and efficiency in handling complex constraints. Their ability to balance local and global optimization strategies makes them invaluable in solving high-dimensional, nonlinear, and constrained problems. These application areas include:

Engineering

Trust region methods are widely used in engineering, particularly in structural optimization, to improve the stability and efficiency of design processes. These methods address challenges in high-dimensional design problems by iteratively refining solutions with defined trust regions,making them effective for complex objectives such as optimization of material distribution, minimizing stress, or improving flow geometries. For instance, Yano et al. [8] introduce a globally convergent method to accelerate topology optimization with trust region frameworks. This approach significantly reduces computational costs while maintaining accuracy in optimal design solutions.

Finance

Trust region methods are also increasingly applied in the financial sector as these methods ensure stable and reliable convergence even when dealing with complex, non-linear and volatile financial data. For example, in the study by Phua et al. [9], trust region methods were employed during the optimization of neural network training. This approach significantly improved the forecasting accuracy of major stick indices like DAX and NASDAQ by effectively managing high-dimensional and noisy financial datasets.

Machine Learning

Algorithms like ACKTR (Actor-Critic using Kronecker-Factored Trust Region) use trust regions to improve training stabilities and sample efficiencies in deep reinforcement learning. Specifically, ACKTR uses an approximation of the natural gradient to maintain a trust region during updates, preventing large and unstable parameter changes. This benefits facilitation of applications in robotics and autonomous systems [10].

Conclusion

Trust region methods provide a robust and efficient framework for solving optimization problems through the construction of a region around the current solution where an accurate simplified model approximates the objective function. This approach ensures convergence, even for nonlinear and complex problems encountered in fields such as engineering. The algorithm’s advantage lies in its adaptability, dynamically adjusting the trust region based on the accuracy of the model’s predictions, thereby ensuring global and, in some cases, superlinear convergence [11]. Future research directions may involve improving the scalability of trust region methods for high-dimensional problems, integrating them with machine learning frameworks for better predictive modeling [12], and developing more efficient techniques to handle constraints and uncertainties in real-world applications.

References

- ↑ Nocedal, J., & Wright, S. J. (2006). Numerical optimization. Springer.

- ↑ Yuan, Y. (2015b). Recent advances in trust region algorithms. Mathematical Programming, 151(1), 249–281. https://doi.org/10.1007/s10107-015-0893-2

- ↑ Conn, A. R., Gould, N. I. M., & Toint, P. L. (2000). Trust-region methods. Siam.

- ↑ Grippo, L., & Sciandrone, M. (2023). Introduction to Methods for Nonlinear Optimization. In Unitext. Springer International Publishing. https://doi.org/10.1007/978-3-031-26790-1

- ↑ Dutta, S. (2016). Optimization in Chemical Engineering. Cambridge University Press.

- ↑ Fletcher, R. (1987). Practical Methods of Optimization (2e), John Wiley and Sons.

- ↑ Himmelblau, D. M. (2018). Applied nonlinear programming. McGraw-Hill.

- ↑ Yano, M., Huang, T., & Zahr, M. J. (2021). A globally convergent method to accelerate topology optimization using on-the-fly model reduction. Computer Methods in Applied Mechanics and Engineering, 375, 113635–113635. https://doi.org/10.1016/j.cma.2020.113635

- ↑ Phua, P. K. H., Xiaotian Zhu, & Chung Haur Koh. (n.d.). Forecasting stock index increments using neural networks with trust region methods. Proceedings of the International Joint Conference on Neural Networks, 2003. https://doi.org/10.1109/ijcnn.2003.1223354

- ↑ Wu, Y., Elman Mansimov, Grosse, R. B., Liao, S., & Ba, J. (2017). Scalable trust-region method for deep reinforcement learning using Kronecker-factored approximation. Neural Information Processing Systems, 30, 5279–5288.

- ↑ Zhang, J., Wu, L., & Zhang, X. (2007). A Trust Region Method for Optimization Problem with Singular Solutions. Applied Mathematics and Optimization, 56(3), 379–394. https://doi.org/10.1007/s00245-007-9009-6

- ↑ Lin, C.-J., Weng, R. C., & S. Sathiya Keerthi. (2008). Trust Region Newton Method for Logistic Regression. Journal of Machine Learning Research, 9(22), 627–650. https://doi.org/10.1145/1390681.1390703